OpenAI

Route OpenAI model calls through Respan Gateway and track requests.

Set up Respan

- Sign up — Create an account at platform.respan.ai

- Create an API key — Generate one on the API keys page

- Add credits or a provider key — Add credits on the Credits page or connect your own provider key on the Integrations page

Use AI

Add the Docs MCP to your AI coding tool to get help building with Respan. No API key needed.

This section is for Respan LLM gateway users.

Use Respan Gateway to call OpenAI models while keeping unified observability (logs, cost, latency, and reliability metrics) in Respan.

Prerequisites

- A Respan API key

- An OpenAI API key (bring your own key, BYOK)

Supported SDKs / integrations

✅ Supported Frameworks

❌ Unsupported Frameworks

Configuration

There are two ways to add your OpenAI credentials to your requests: globally through the UI, or per request in code.

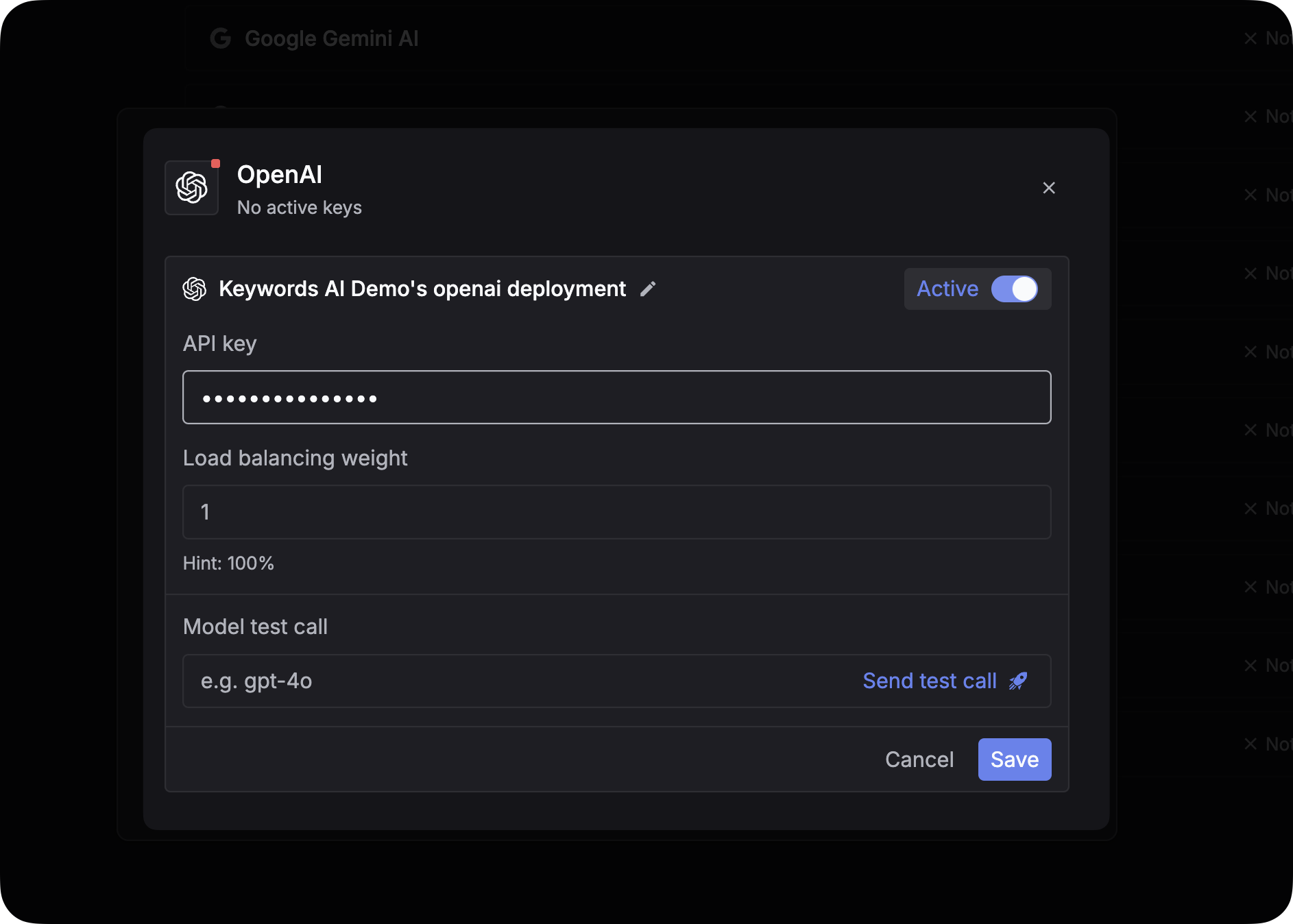

Via UI (Global)

Via code (Per-Request)

You can pass credentials dynamically in the request body. This is useful if you need to use your users’ own API keys (BYOK).

Add the customer_credentials parameter to your Gateway request:
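As a rough illustration, the sketch below builds (but does not send) a Gateway request that carries a per-request OpenAI key. Only the `customer_credentials` parameter name comes from this page. The nested shape (`{"openai": {"api_key": ...}}`), the Bearer authorization header, the OpenAI-style chat payload, and the placeholder `GATEWAY_URL` are all assumptions made for illustration; check the Respan API reference for the actual endpoint and schema.

```python
import json
import urllib.request

# Hypothetical placeholder: replace with the Gateway endpoint from the Respan docs.
GATEWAY_URL = "https://gateway.example.invalid/chat/completions"


def build_gateway_request(respan_api_key, customer_openai_key, messages,
                          model="gpt-4o-mini", url=GATEWAY_URL):
    """Build a POST request whose body includes per-request credentials."""
    body = {
        "model": model,
        "messages": messages,
        # Assumed shape: credentials keyed by provider name.
        "customer_credentials": {
            "openai": {"api_key": customer_openai_key},
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            # Assumed auth scheme: the Respan API key sent as a Bearer token.
            "Authorization": f"Bearer {respan_api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    request = build_gateway_request(
        respan_api_key="YOUR_RESPAN_API_KEY",
        customer_openai_key="YOUR_CUSTOMER_OPENAI_KEY",
        messages=[{"role": "user", "content": "Hello"}],
    )
    # Inspect the JSON body before sending it with urllib.request.urlopen(request).
    print(json.dumps(json.loads(request.data), indent=2))
```

Keeping request construction separate from sending makes it easy to look up each user's key at call time and to check the payload before any network traffic happens.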

Log OpenAI requests

If you are not using the Gateway to proxy requests, you can still log your OpenAI requests to Respan asynchronously. This allows you to track cost, latency, and performance metrics for external calls.
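The asynchronous pattern described above might look roughly like the following sketch: time the model call, then hand a log record to a background worker so that logging never blocks or breaks the request path. This is not the Respan SDK. `AsyncLogger`, `timed_call`, the `send` callable, and the record fields are hypothetical names chosen for illustration; in practice `send` would post the record to whatever logging endpoint the Respan API reference specifies.

```python
import queue
import threading
import time


def timed_call(fn, *args, **kwargs):
    """Run fn and return (result, latency_in_seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


class AsyncLogger:
    """Hypothetical helper: ships log records from a background thread."""

    def __init__(self, send):
        self._send = send  # callable that delivers one record
        self._queue = queue.Queue()
        worker = threading.Thread(target=self._run, daemon=True)
        worker.start()

    def log(self, record):
        # Returns immediately; delivery happens on the worker thread.
        self._queue.put(record)

    def flush(self):
        # Block until every queued record has been processed.
        self._queue.join()

    def _run(self):
        while True:
            record = self._queue.get()
            try:
                self._send(record)
            except Exception:
                pass  # a failed log delivery should not affect the application
            finally:
                self._queue.task_done()


if __name__ == "__main__":
    logger = AsyncLogger(send=print)  # stand-in for an HTTP POST to a logging endpoint

    # Stand-in for a real OpenAI SDK call.
    reply, latency = timed_call(lambda prompt: prompt.upper(), "hello")

    logger.log({"model": "gpt-4o-mini", "output": reply, "latency": latency})
    logger.flush()
```

Calling `flush()` before the process exits matters here, because the worker is a daemon thread and any records still queued would otherwise be dropped.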

OpenAI Python SDK