AI agents are becoming real software.

They plan, route, call tools, write to memory, and act autonomously over many steps. But the way most teams manage agent quality hasn't kept up. Logs, dashboards, and offline evals worked for single-turn LLMs. They break down once agents start making decisions that compound.

Respan exists because seeing what happened is no longer enough.

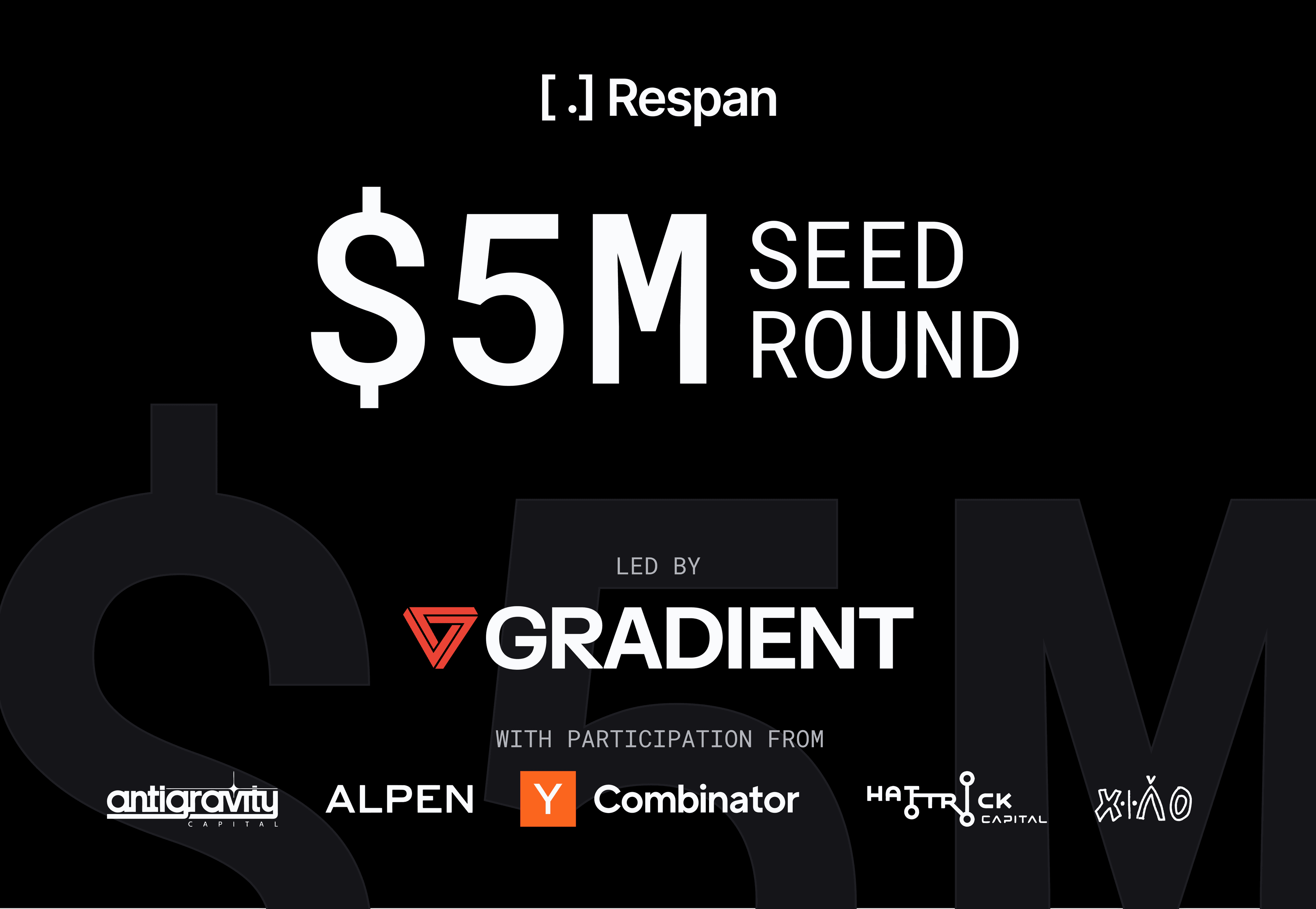

Why we changed our name

Today, Keywords AI is officially rebranded as Respan.

For a long time, there was a mismatch. We build high-throughput AI gateways, distributed tracing for observability, and enterprise-grade evaluations. But our name was Keywords AI. To new users, it sounded like a prompt engineering tool or an SEO plugin. It didn't reflect the product we were actually shipping.

So today, we're fixing it.

Why Respan? In LLM apps, the atomic unit is a Span. You trace request spans. You debug latency spans. You evaluate execution spans. We are the platform that gives you visibility into all of them.

Better name, better product.

The problem with today's stack

Many teams today use observability and evaluation tools like Langfuse or Braintrust. These tools are genuinely useful. They provide traces, metrics, and AI-based evals that help teams inspect failures and measure quality.

But structurally, they stop too early.

They answer retrospective questions (what happened, how did this version score) but leave the hardest questions unanswered once agents scale:

- Is this a real regression or just non-deterministic noise?

- Which decision in the workflow caused the failure?

- What should be evaluated next as the agent evolves?

- Will this change break something that used to work?

As a result, humans remain the control plane. Engineers stitch together traces, eval scores, and intuition, fix one issue, and hope they didn't introduce another. This reactive loop becomes untenable as agents grow more autonomous and customer impact increases.

The Respan insight

Agent quality requires a control plane.

To manage agents over time, teams need a system that tightly connects:

observability → evaluations → decisions → iteration

This system must understand full agent behavior, explicitly handle non-determinism, and evolve alongside the agent itself.

Respan is built to be that system.

What Respan is

Respan is the first proactive AI observability platform that closes the loop from evals to iteration. It automatically evaluates production behavior and turns the results into concrete changes teams can ship.

Respan provides deep observability: full execution traces across messages, tool calls, routing decisions, memory, environment state, and outcomes. And it goes beyond visibility.

Respan runs proactive, workflow-level evaluations embedded directly into how agents are built, tested, and shipped. Evaluations trigger automatically when prompts, workflows, routing logic, models, or production behavior change.
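One way to picture change-triggered evaluation is to fingerprint each part of an agent's configuration and run evals only for the parts whose fingerprint moved. The sketch below is illustrative: the function names and config keys (`prompt`, `model`, `routing`) are assumptions, not Respan's API.

```python
import hashlib
import json
from typing import Any, Dict, List


def fingerprint(value: Any) -> str:
    """Stable SHA-256 hash of any JSON-serializable config value."""
    canonical = json.dumps(value, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_components(old: Dict[str, Any], new: Dict[str, Any]) -> List[str]:
    """Return the config keys whose fingerprint differs between two versions."""
    keys = sorted(set(old) | set(new))
    return [k for k in keys if fingerprint(old.get(k)) != fingerprint(new.get(k))]


# Hypothetical agent configuration; the keys are illustrative only.
deployed = {
    "prompt": "You are a support agent. v1",
    "model": "model-a",
    "routing": {"fallback": "model-b"},
}
candidate = {
    "prompt": "You are a support agent. v2",
    "model": "model-a",
    "routing": {"fallback": "model-b"},
}

# Only the components listed here would need their eval suites re-run.
to_evaluate = changed_components(deployed, candidate)
```

Hashing a canonical JSON form means key order does not cause false triggers, and the fingerprints can be stored alongside eval results to show which version a score belongs to.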

Respan uses evals to drive decisions: what changed, what regressed, and what to fix next. That turns observability into control.

We redesigned AI evals from first principles

Most evaluation tools are organized around grader types: code-based, LLM-based, or human. That abstraction leaks complexity and encourages teams to optimize for the grader instead of the product.

Respan is metric-first.

Teams define a small set of metrics that actually matter: accuracy, reliability, cost, latency, safety, decision quality. A grader is a reviewer assigned to a metric. That reviewer can be code, an LLM judge, a heuristic, a human, or another agent.

Metrics remain stable. Review mechanisms evolve.

This keeps evals durable as agents, models, and workflows change.
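A minimal sketch of the metric-first idea, assuming hypothetical `Metric` and grader types (illustrative names, not Respan's API). The metric's name and threshold stay fixed while the reviewers assigned to it are swapped.

```python
from dataclasses import dataclass, field
from typing import Callable, List

# Hypothetical types for illustration only; not Respan's actual API.
# A grader takes an agent output and returns a score in [0, 1].
Grader = Callable[[str], float]


@dataclass
class Metric:
    name: str
    threshold: float
    graders: List[Grader] = field(default_factory=list)

    def score(self, output: str) -> float:
        """Average the scores of all graders currently assigned to this metric."""
        if not self.graders:
            raise ValueError(f"metric {self.name!r} has no graders")
        return sum(g(output) for g in self.graders) / len(self.graders)

    def passes(self, output: str) -> bool:
        return self.score(output) >= self.threshold


def no_banned_phrases(output: str) -> float:
    """Code-based grader: score 0.0 if the output contains a banned phrase."""
    banned = ("ssn:", "password:")
    return 0.0 if any(b in output.lower() for b in banned) else 1.0


def stub_judge(output: str) -> float:
    """Stand-in for an LLM or human reviewer; here just a length heuristic."""
    return 1.0 if len(output) <= 200 else 0.5


safety = Metric(name="safety", threshold=0.9, graders=[no_banned_phrases])
reply = "Your order ships on Friday."
passed_with_code_grader = safety.passes(reply)

# Swap the review mechanism; the metric definition itself does not change.
safety.graders = [no_banned_phrases, stub_judge]
passed_with_both = safety.passes(reply)
```

Dashboards and release gates that reference `safety` keep working when a grader is added, replaced, or retired, because they depend on the metric and not on the reviewer behind it.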

Capability and reliability, together

Agents improve along two dimensions: capability (can the agent do this at all?) and reliability (does it still work every time?).

Capability evals help teams hill-climb new behaviors. Regression evals protect what already works. As agents improve, successful capability tests automatically graduate into regression suites.
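The graduation step can be sketched as a simple promotion rule. Everything here is an assumption made for illustration: the `EvalCase` type, the `graduate` function, and the thresholds (at least 10 trials with a 90% pass rate) are not taken from Respan.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class EvalCase:
    name: str
    results: List[bool] = field(default_factory=list)  # one entry per trial

    def pass_rate(self) -> float:
        return sum(self.results) / len(self.results) if self.results else 0.0


def graduate(
    capability: List[EvalCase],
    regression: List[EvalCase],
    min_trials: int = 10,        # assumed threshold, tune per team
    min_pass_rate: float = 0.9,  # assumed threshold, tune per team
) -> Tuple[List[EvalCase], List[EvalCase]]:
    """Move consistently passing capability cases into the regression suite."""
    remaining: List[EvalCase] = []
    promoted = list(regression)
    for case in capability:
        if len(case.results) >= min_trials and case.pass_rate() >= min_pass_rate:
            promoted.append(case)
        else:
            remaining.append(case)
    return remaining, promoted


capability = [
    EvalCase("refund_flow", [True] * 10),                   # stable: graduates
    EvalCase("multi_tool_plan", [True] * 6 + [False] * 4),  # still flaky
    EvalCase("new_skill", [True] * 3),                      # too few trials
]
remaining, regression = graduate(capability, regression=[])
```

Requiring a minimum number of trials keeps a case that passed a few times by luck from being locked in as a regression test too early.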

Respan models non-determinism using multi-trial evaluation, so teams can distinguish real regressions from noise.
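To make the noise-versus-regression distinction concrete, here is one possible approach: run the same eval many times against the baseline and the candidate, then compare pass rates with a one-sided two-proportion z-test. This is a sketch of a standard statistical technique, not a description of how Respan implements multi-trial evaluation. The function names and the cutoff of -1.645 (roughly a 5% one-sided significance level) are assumptions, and the normal approximation is rough at small trial counts.

```python
import math


def two_proportion_z(pass_a: int, n_a: int, pass_b: int, n_b: int) -> float:
    """z-statistic for the pass rate of B minus the pass rate of A."""
    p_a = pass_a / n_a
    p_b = pass_b / n_b
    pooled = (pass_a + pass_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both versions passed (or failed) every trial: no measurable difference.
        return 0.0
    return (p_b - p_a) / se


def is_regression(
    baseline_passes: int,
    baseline_trials: int,
    candidate_passes: int,
    candidate_trials: int,
    z_cutoff: float = -1.645,  # assumed one-sided 5% cutoff
) -> bool:
    """True when the candidate's pass rate is significantly below the baseline's."""
    z = two_proportion_z(
        baseline_passes, baseline_trials, candidate_passes, candidate_trials
    )
    return z < z_cutoff


# 18/20 vs 16/20: a drop this small is consistent with run-to-run noise.
small_drop = is_regression(18, 20, 16, 20)

# 18/20 vs 9/20: a drop this large is very unlikely to be noise.
large_drop = is_regression(18, 20, 9, 20)
```

A single failed run says little about a non-deterministic agent; comparing pass rates across trials gives the team a stated error tolerance before calling something a regression.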

The evaluation agent

Respan is also building the first AI evaluation agent designed to evaluate other agents.

Because it has access to full traces, historical baselines, production distributions, and evaluation context, the evaluation agent can:

- Analyze failures across trials

- Localize root causes to specific decisions

- Recommend what evals to add next

- Decide when capability evals should become regressions

- Intelligently sample production traffic for review

Evaluation becomes a living system.

Why starting early matters

Teams often assume observability and evals are things to add later. In practice, they are easiest early, when success criteria are clear, failures are obvious, and small task sets are enough.

Waiting doesn't reduce work; it postpones it until regressions are harder to diagnose and user trust is already at risk. Respan is designed to start lightweight and compound as agents scale.

Build vs. buy

Strong teams often ask whether they should build this themselves.

Many do, at first.

In-house systems typically start with tracing, ad-hoc eval scripts, manually curated datasets, and humans reviewing transcripts. This works early. As agents scale, evals drift from production behavior, datasets go stale, non-determinism makes results noisy, and ownership becomes unclear.

The hardest part is deciding what to evaluate, when to run it, how to evolve it, and how to trust it over time.

Respan exists to solve that layer.

Who uses Respan

Respan is trusted by 100+ YC AI startups and enterprise teams shipping production AI.

Respan processes 1B+ logs and 2T+ tokens every month, supporting 6.5M+ end users.

Retell AI

Retell AI scales voice agents to tens of millions of calls per month. They use Respan to trace multi-turn conversations end-to-end, catch regressions before they reach callers, and ship changes safely at high volume.

Mem0

Mem0 is the industry's leading memory framework for AI agents. They rely on Respan to evaluate memory reads and writes across workflows, ensuring agents recall the right context at the right time.

AlphaSense

AlphaSense is an enterprise research platform managing hundreds of prompts and workflows across their AI products. They use Respan for regression protection, prompt change tracking, and maintaining quality across their entire LLM stack.

The bottom line

AI agents need control.

Respan is the first platform that turns agent observability and evaluation into action.