TL;DR

AI observability is the operational discipline of running AI systems in production — every model call, every embedding, every agent step traced, evaluated, and attributed for cost. It's the broader category; LLM observability is the deepest specialization. Generic APM doesn't cover it because AI systems can return HTTP 200 and still be wrong. Every team shipping real AI products needs purpose-built tooling.

What is AI observability?

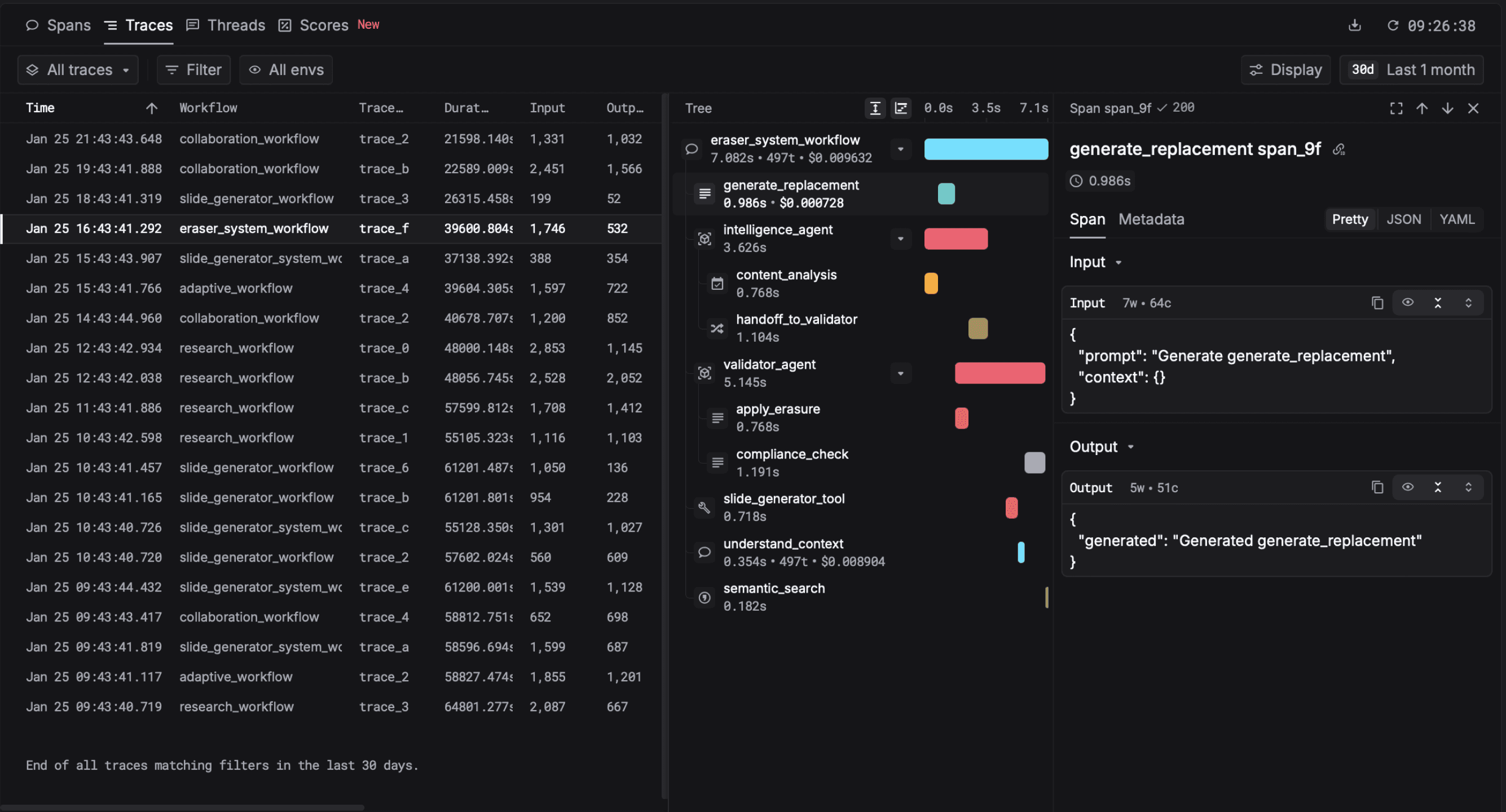

AI observability is the practice of capturing, tracing, evaluating, and monitoring every AI-powered operation in production. The "AI" qualifier means it spans the full stack of model types — large language models and agents, embedding models, classifiers, vision and audio pipelines, recommendation engines, and the increasingly common composite systems that chain several of these together.

What it actually does in practice: capture every model call as a trace, score outputs against quality criteria you define, attribute cost per feature and per user, alert on regressions, and keep a structured archive of production data that you can mine for evaluation and fine-tuning later.
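To make "attribute cost per feature and per user" concrete, here is a minimal sketch in plain Python. The record shape, the model name `example-llm`, and the prices are all assumptions for illustration; real prices vary by provider and change over time.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical USD prices per 1K tokens; not real provider pricing.
PRICE_PER_1K = {"example-llm": {"input": 0.0025, "output": 0.01}}


@dataclass
class CallRecord:
    feature: str
    user_id: str
    model: str
    input_tokens: int
    output_tokens: int


def call_cost(rec: CallRecord) -> float:
    """Cost of one call from its token counts and the price table."""
    price = PRICE_PER_1K[rec.model]
    return (rec.input_tokens / 1000) * price["input"] + (
        rec.output_tokens / 1000
    ) * price["output"]


def cost_by(records, key: str) -> dict:
    """Sum call costs grouped by one attribute, e.g. 'feature' or 'user_id'."""
    totals = defaultdict(float)
    for rec in records:
        totals[getattr(rec, key)] += call_cost(rec)
    return dict(totals)


records = [
    CallRecord("support_agent", "u_123", "example-llm", 1000, 500),
    CallRecord("support_agent", "u_456", "example-llm", 2000, 1000),
    CallRecord("search", "u_123", "example-llm", 400, 0),
]
by_feature = cost_by(records, "feature")
by_user = cost_by(records, "user_id")
```

The same records answer both questions ("which feature is expensive?" and "which user is expensive?") because the dimensions are stored on each call rather than aggregated away at write time.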

AI observability vs LLM observability vs APM

AI observability is the umbrella; LLM observability is the deepest specialization most teams need. APM stays in the picture as a forwarding destination, not a replacement.

The three terms get confused. Here's how they actually relate:

- APM (Application Performance Monitoring) — general-purpose tools such as Datadog, New Relic, and Honeycomb. Built for deterministic systems. Tracks HTTP-level latency and errors. Now ships AI bolt-ons that capture spans but miss the AI-specific structure.

- AI observability — purpose-built for any AI workload. Adds quality evaluation, prompt and model versioning, content-level tracing, and per-feature cost attribution. Includes LLM observability as a subset.

- LLM observability — the LLM-specific specialization. Same components as AI observability with deeper support for prompt management, LLM-as-judge evals, agent tracing, and gateway integration. Most teams shipping LLM products need this layer specifically. Read the LLM observability guide.

The right architecture for most teams: a dedicated AI observability platform as primary, with OTel forwarding to APM for cross-cutting infrastructure metrics. Best of both.

The single signal that decides whether a tool can do AI observability or just dress up as it: does it capture inputs and outputs as first-class data, or does it redact them? Traditional APM redacts payload bodies for privacy and cost. That makes APM useless for AI debugging — you can't reason about a hallucination without seeing the prompt and the completion side-by-side.

Most AI workloads in production aren't pure LLM either. The majority of teams we see running LLM features in production in 2026 also run at least one non-LLM AI workload (embedding lookups, classifier scoring, recommendation models, or old-fashioned ML models), often in the same trace. "AI observability" becomes the right framing the moment your trace has more than one model type in it.


The five components

Every mature AI observability stack covers these five. Skipping one creates a known blind spot.

1. Tracing

End-to-end capture of every operation as a tree of spans. Built on OpenTelemetry's GenAI semantic conventions for portability. Multi-step agents are impossible to debug without it. Deep dive on LLM tracing.
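A tree of spans is easier to picture with a toy tracer. The sketch below uses only the standard library and is not the OpenTelemetry SDK or Respan's API; the `Tracer` and `Span` classes are hypothetical. The attribute keys follow the naming used by the OTel GenAI semantic conventions as I understand them, so check the current spec before relying on them.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Span:
    name: str
    parent_id: Optional[str]
    span_id: str = field(default_factory=lambda: uuid.uuid4().hex[:16])
    attributes: dict = field(default_factory=dict)
    start: float = 0.0
    end: float = 0.0


class Tracer:
    """Toy tracer: nested `with` blocks become parent/child spans."""

    def __init__(self):
        self.spans = []
        self._stack = []

    @contextmanager
    def span(self, name, attributes=None):
        parent_id = self._stack[-1].span_id if self._stack else None
        s = Span(name=name, parent_id=parent_id, attributes=dict(attributes or {}))
        s.start = time.perf_counter()
        self.spans.append(s)
        self._stack.append(s)
        try:
            yield s
        finally:
            s.end = time.perf_counter()
            self._stack.pop()


def render(spans, parent_id=None, depth=0):
    """Return the span tree as indented lines, one per span."""
    lines = []
    for s in spans:
        if s.parent_id == parent_id:
            lines.append("  " * depth + s.name)
            lines.extend(render(spans, s.span_id, depth + 1))
    return lines


tracer = Tracer()
with tracer.span("agent.run"):
    with tracer.span("chat", {"gen_ai.operation.name": "chat",
                              "gen_ai.request.model": "example-llm"}) as llm:
        # Token counts are filled in once the (hypothetical) model call returns.
        llm.attributes["gen_ai.usage.input_tokens"] = 120
        llm.attributes["gen_ai.usage.output_tokens"] = 45
    with tracer.span("tool.lookup_order", {"gen_ai.operation.name": "execute_tool"}):
        pass

tree = render(tracer.spans)
```

Each agent step and tool call gets its own span with a `parent_id`, which is what lets you see which step in a multi-step run was slow or wrong.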

2. Evaluation

Quality scoring against criteria you define. Three flavors — rule-based (fast, deterministic), LLM-as-judge (cheap, scales), human review (slow, ground truth). The teams that ship reliable AI run all three. Deep dive on evals.
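Here is a rough sketch of how the three flavors can sit together. Everything in it is illustrative: the rule names, the 0.5 review threshold, and the judge prompt are assumptions, and `fake_judge` stands in for a real model call so the example runs offline.

```python
import re
from typing import Callable


def rule_no_email_leak(output: str) -> float:
    """Rule-based check: 0.0 if the output contains an email address."""
    return 0.0 if re.search(r"[\w.+-]+@[\w-]+\.[\w.]+", output) else 1.0


def rule_within_length(output: str, limit: int = 400) -> float:
    """Rule-based check: 1.0 if the output fits the length budget."""
    return 1.0 if len(output) <= limit else 0.0


def llm_judge(question: str, output: str, judge: Callable[[str], str]) -> float:
    """LLM-as-judge: ask a model for a 1-5 rating and map it to 0.0-1.0."""
    prompt = (
        f"Question: {question}\nAnswer: {output}\n"
        "Rate helpfulness from 1 to 5. Reply with the number only."
    )
    match = re.search(r"[1-5]", judge(prompt))
    return (int(match.group()) - 1) / 4 if match else 0.0


def evaluate(question: str, output: str, judge: Callable[[str], str]):
    """Run all automated checks; flag low scorers for human review."""
    scores = {
        "no_email_leak": rule_no_email_leak(output),
        "within_length": rule_within_length(output),
        "helpfulness": llm_judge(question, output, judge),
    }
    needs_human_review = min(scores.values()) < 0.5  # hypothetical threshold
    return scores, needs_human_review


def fake_judge(prompt: str) -> str:
    # Stand-in for a real model call; always answers "4".
    return "4"


good_scores, good_review = evaluate(
    "When will it ship?", "Your order ships Monday.", fake_judge
)
bad_scores, bad_review = evaluate(
    "When will it ship?", "Email me at bob@example.com", fake_judge
)
```

The cheap rules run on every output, the judge scores what rules cannot express, and only the outputs that fail either one land in the slow human queue.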

3. Metrics

Aggregates over time and dimensions: time to first token (TTFT), generation latency at P95/P99, cost per user, eval score, retry rate. Sliceable by feature, model, prompt version, and user, not just time. The gap between P95 and P99 is usually where the customer experience problem hides.
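A small worked example shows why the P95/P99 gap matters. This sketch uses a nearest-rank percentile and made-up latency data in which 3% of `support_agent` requests are very slow; the record fields are assumptions.

```python
import math
from collections import defaultdict


def percentile(values, pct: int):
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def latency_by(records, dimension: str) -> dict:
    """Group latencies by one dimension and report P95 and P99 for each group."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[dimension]].append(rec["latency_ms"])
    return {
        key: {"p95": percentile(vals, 95), "p99": percentile(vals, 99)}
        for key, vals in groups.items()
    }


# Made-up data: 97 fast and 3 very slow support_agent calls, 100 fast search calls.
records = (
    [{"feature": "support_agent", "latency_ms": 200}] * 97
    + [{"feature": "support_agent", "latency_ms": 5000}] * 3
    + [{"feature": "search", "latency_ms": 80}] * 100
)
summary = latency_by(records, "feature")
```

For `support_agent`, P95 comes out at 200 ms while P99 is 5000 ms: the dashboard looks healthy at P95, yet 3 in 100 users wait five seconds. Slicing by feature also keeps the fast `search` traffic from hiding that tail.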

4. Prompt and model versioning

Prompts and model configurations are code. They need diffs, rollback, and A/B testing. A change to a system prompt can degrade quality more than a code change.
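Treating prompts as code can start very small. The sketch below is an in-memory stand-in, not any particular platform's prompt manager: `PromptRegistry` and its methods are hypothetical, version IDs are content hashes, and diffs come from the standard library's `difflib`. A/B assignment is left out.

```python
import difflib
import hashlib


def version_id(prompt: str) -> str:
    """Content-addressed version: the same text always gets the same ID."""
    return hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]


def prompt_diff(old: str, new: str) -> str:
    """Unified diff between two prompt versions, line by line."""
    return "\n".join(
        difflib.unified_diff(
            old.splitlines(), new.splitlines(),
            fromfile="old", tofile="new", lineterm="",
        )
    )


class PromptRegistry:
    """Keeps the publish history per prompt name so rollback is one call."""

    def __init__(self):
        self._history = {}

    def publish(self, name: str, text: str) -> str:
        self._history.setdefault(name, []).append(text)
        return version_id(text)

    def current(self, name: str) -> str:
        return self._history[name][-1]

    def rollback(self, name: str) -> str:
        # Drop the latest version, but never remove the only one.
        if len(self._history[name]) > 1:
            self._history[name].pop()
        return self.current(name)


v1 = "You are a support agent.\nAnswer briefly."
v2 = "You are a support agent.\nAnswer briefly and cite the order ID."

registry = PromptRegistry()
id_v1 = registry.publish("support", v1)
id_v2 = registry.publish("support", v2)
diff = prompt_diff(v1, v2)
restored = registry.rollback("support")
```

Stamping the version ID on every trace is what makes it possible to answer "did quality drop when the prompt changed?" after the fact.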

5. Dataset curation

Production traces are gold. The most interesting failures and high-quality examples should flow into evaluation datasets and fine-tuning corpora. The platforms that close this loop turn observability into compounding advantage.
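As a sketch of that loop, the snippet below splits scored traces into failures (for eval datasets) and exemplars (for fine-tuning candidates) and serializes them as JSONL. The trace fields and both thresholds are assumptions chosen for illustration.

```python
import json


def curate(traces, low: float = 0.3, high: float = 0.9):
    """Split traces into clear failures and high-quality exemplars by eval score."""
    failures = [t for t in traces if t["eval_score"] <= low]
    exemplars = [t for t in traces if t["eval_score"] >= high]
    return failures, exemplars


def to_jsonl(rows, fields=("input", "output", "eval_score")) -> str:
    """Serialize the selected fields of each row as one JSON object per line."""
    return "\n".join(json.dumps({k: row[k] for k in fields}) for row in rows)


traces = [
    {"trace_id": "t1", "input": "Where is my order?", "output": "It ships Monday.", "eval_score": 0.95},
    {"trace_id": "t2", "input": "Cancel my plan", "output": "I cannot help.", "eval_score": 0.10},
    {"trace_id": "t3", "input": "Reset password", "output": "Use the reset link.", "eval_score": 0.60},
]

failures, exemplars = curate(traces)
eval_dataset = to_jsonl(failures)
finetune_candidates = to_jsonl(exemplars)
```

In practice a human should review both sets before they are used, since an automated eval score is itself only an estimate.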

How to instrument

```python
from respan import Respan
from openai import OpenAI

respan = Respan(api_key="...")
client = respan.wrap(OpenAI())

# Every AI call now traced, evaluated, and cost-attributed
client.chat.completions.create(
    model="gpt-4o",
    messages=[{"role": "user", "content": "..."}],
    metadata={
        "user_id": "u_123",
        "feature": "support_agent",
        "prompt_template_id": "support.v3",
    },
)
```

For non-LLM AI workloads (embedding pipelines, classifier inference, recommendation calls), wrap the call with respan.span(). Respan captures the inputs, outputs, latency, and cost as a generic AI span using OTel GenAI conventions where applicable.
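The exact signature of respan.span() isn't shown here, so the sketch below does not use it. It is a standard-library stand-in that illustrates the idea of capturing inputs, outputs, and latency around a non-LLM call; the `traced` decorator, the `CAPTURED` list, and the toy classifier are all hypothetical.

```python
import functools
import time

# In-memory sink standing in for an observability backend.
CAPTURED = []


def traced(kind: str):
    """Decorator that records inputs, output, and latency of each call."""

    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            result = fn(*args, **kwargs)
            CAPTURED.append({
                "kind": kind,
                "name": fn.__name__,
                "inputs": {"args": args, "kwargs": kwargs},
                "output": result,
                "latency_s": time.perf_counter() - start,
            })
            return result

        return wrapper

    return decorator


@traced("classifier")
def classify_intent(text: str) -> str:
    # Toy keyword classifier standing in for a real model.
    return "refund" if "refund" in text.lower() else "other"


label = classify_intent("I want a refund")
```

The point is that a classifier or embedding call gets the same treatment as an LLM call: inputs and outputs are stored as first-class data next to the timing, not dropped.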

Common AI observability mistakes

- Sampling production traces. The rare failures are the ones you most need. Capture 100% by default.

- Treating quality as one number. Decompose into 3-5 orthogonal eval criteria; chart each.

- Generic APM as the only signal. APM redacts payloads and has no quality model. Use a dedicated AI platform forwarding to APM.

- No alerts on cost or eval score regression. The most common production AI bugs are silent. Alert on them.

- Multi-step agents traced as single calls. Each tool call and agent step needs its own span.
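The alerting point in the list above can be sketched as two threshold checks against a baseline window. The thresholds (a 0.05 drop in mean eval score, a 1.5x rise in mean cost) and the sample numbers are assumptions; real systems usually add minimum sample sizes and statistical tests.

```python
from statistics import mean


def eval_regressed(baseline_scores, recent_scores, max_drop: float = 0.05) -> bool:
    """True if the mean eval score fell by more than max_drop."""
    return mean(baseline_scores) - mean(recent_scores) > max_drop


def cost_regressed(baseline_costs, recent_costs, max_ratio: float = 1.5) -> bool:
    """True if the mean cost per call grew beyond max_ratio times the baseline."""
    return mean(recent_costs) > max_ratio * mean(baseline_costs)


# Made-up windows: last week versus the last hour.
baseline_scores = [0.90, 0.92, 0.88]
recent_scores = [0.80, 0.78, 0.82]
baseline_costs = [0.010, 0.012, 0.011]
recent_costs = [0.020, 0.022, 0.021]

alerts = []
if eval_regressed(baseline_scores, recent_scores):
    alerts.append("eval score regression")
if cost_regressed(baseline_costs, recent_costs):
    alerts.append("cost regression")
```

Neither failure raises an exception or an HTTP error, which is why both need an explicit check wired to an alert.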


Head of DevRel at Respan (YC W24). Working alongside the team running the infrastructure that handles 80M+ LLM requests a day.

Add observability to every AI call in two lines

Trace, evaluate, attribute, and alert across every AI workload — LLM and beyond. Free tier with generous limits.

Related guides: LLM observability · LLM tracing · LLM evals · LLM gateway