On April 30, 2026, Palo Alto Networks announced its acquisition of Portkey. Portkey will become the AI Gateway for Prisma AIRS, Palo Alto's AI security platform. The deal is expected to close in Palo Alto's Q4 fiscal 2026.

For the AI teams who picked Portkey as their developer-friendly gateway, this is a real strategic shift. Portkey's roadmap is no longer "the best DX for AI engineers." It is "the central nervous system that monitors, routes, and secures every AI transaction across the enterprise" (that is Palo Alto's framing, not ours).

This post covers what the Portkey deal actually means for existing customers, where the broader LLM observability and gateway space is consolidating, and the independent alternatives still worth considering.

What the Portkey deal means

Portkey today processes trillions of tokens per month and provides access to 1,600+ LLMs and 50+ AI guardrails. Until the acquisition, the product was a developer-friendly AI gateway with routing, caching, fallback, prompt management, and a permissive open-source license. That is what most teams adopted it for.

After the acquisition, Portkey becomes a security and governance product. From the press release:

- Portkey is the AI Gateway for Prisma AIRS, inspecting AI traffic and enforcing security and governance policies at runtime.

- The product surface emphasizes AI Identity Security, least-privilege agent execution, and audit-grade traffic inspection.

- Pricing and packaging will integrate with Palo Alto's enterprise security platform.

- Existing and new Portkey customers will continue to be supported, but tighter integration with Prisma AIRS is the strategic priority.

What this means in practice:

| You picked Portkey for | Risk after the deal |
|---|---|
| Developer-friendly DX (one-line proxy, OpenAI-compatible API) | Roadmap shifts to security buyers; DX investments slow down |
| Open-source self-hosted gateway | OSS likely continues, but enterprise features land in Prisma AIRS first |
| Prompt management + dashboards | Lower priority versus identity, audit, and policy |
| Reasonable pay-as-you-go pricing | Repackaged into Palo Alto enterprise SKUs over the next 12 months |
| Independent vendor, no platform lock-in | You are now downstream of Palo Alto's roadmap |

If your stack relies on Portkey for routing, caching, and developer experience, we estimate you have a 12 to 18 month window before the value drift becomes hard to ignore. Existing contracts continue, so there is no urgent failure date, but this is the moment to evaluate where to migrate.

The broader 2026 LLM observability and gateway shakeup

Portkey is not an isolated event. Five major LLM observability or gateway companies have been acquired in the last 16 months:

| Company | Acquirer | Date | What changes |
|---|---|---|---|
| TruEra (TruLens) | Snowflake | Q4 2024 | Folded into Snowflake AI observability |
| Velvet | Arize AI | March 2025 | Bolt-on into Arize AI observability |
| Langfuse | ClickHouse | Jan 16, 2026 | OSS continues; roadmap follows ClickHouse customers |
| Helicone | Mintlify | Mar 3, 2026 | Maintenance mode; no new features |
| Portkey | Palo Alto Networks | Apr 30, 2026 | Becomes Prisma AIRS gateway |

The pattern is clear. The LLM observability and gateway category got commoditized fast, and the most natural homes for those products were larger platform vendors who could fold them into something bigger. Three of those acquisitions happened in the last four months.

For teams that bet on a standalone vendor in 2024 to 2025, there is a real chance your platform is now part of a larger company with a different strategic priority than the one you signed up for.

What Portkey customers actually need to replace

Before picking a target, map what you used Portkey for. Most teams used some combination of:

| Portkey feature | Why it mattered |
|---|---|
| Unified gateway, 1,600+ models | One API, no per-provider integration code |
| Automatic fallback chains | Resilience when a provider degrades |
| Caching | Cost and latency reduction on repeated prompts |
| Rate limiting + virtual keys | Per-tenant cost controls and abuse protection |
| Prompt library | Versioned, deployable prompts without code changes |
| Observability dashboards | Cost, latency, token tracking |
| Guardrails | PII, prompt injection, output validation |
| OpenAI-compatible API | Drop-in replacement, no SDK rewrite |

If you only used Portkey as a thin gateway, you have many options. If you used it as a full developer platform, you need a replacement that covers gateway plus observability plus prompt management plus evals, and not all of the alternatives do.
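When mapping features, it helps to be concrete about what a gateway does on your behalf. As one illustration, the "automatic fallback chains" row boils down to trying providers in order until one succeeds. The sketch below is a generic version of that pattern, not Portkey's or any vendor's implementation; the two provider functions are hypothetical stand-ins for real client calls.

```python
from typing import Callable, Sequence


class AllProvidersFailed(Exception):
    """Raised when every provider in the chain has failed."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each provider in order and return (provider_name, response)."""
    errors: list[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a production gateway would catch narrower errors
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary provider timed out")


def healthy_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


used, answer = call_with_fallback(
    "Hello",
    [("primary", flaky_primary), ("secondary", healthy_secondary)],
)
print(used, answer)
```

If your application code never has to contain logic like this, the gateway is doing it for you, and the replacement needs to as well.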

Who's still independent

The vendors below have not been acquired and are worth evaluating. The first group is pure-play AI engineering platforms; the second is products from larger companies that ship an AI gateway or observability tool as one of many things they do.

Pure-play independents

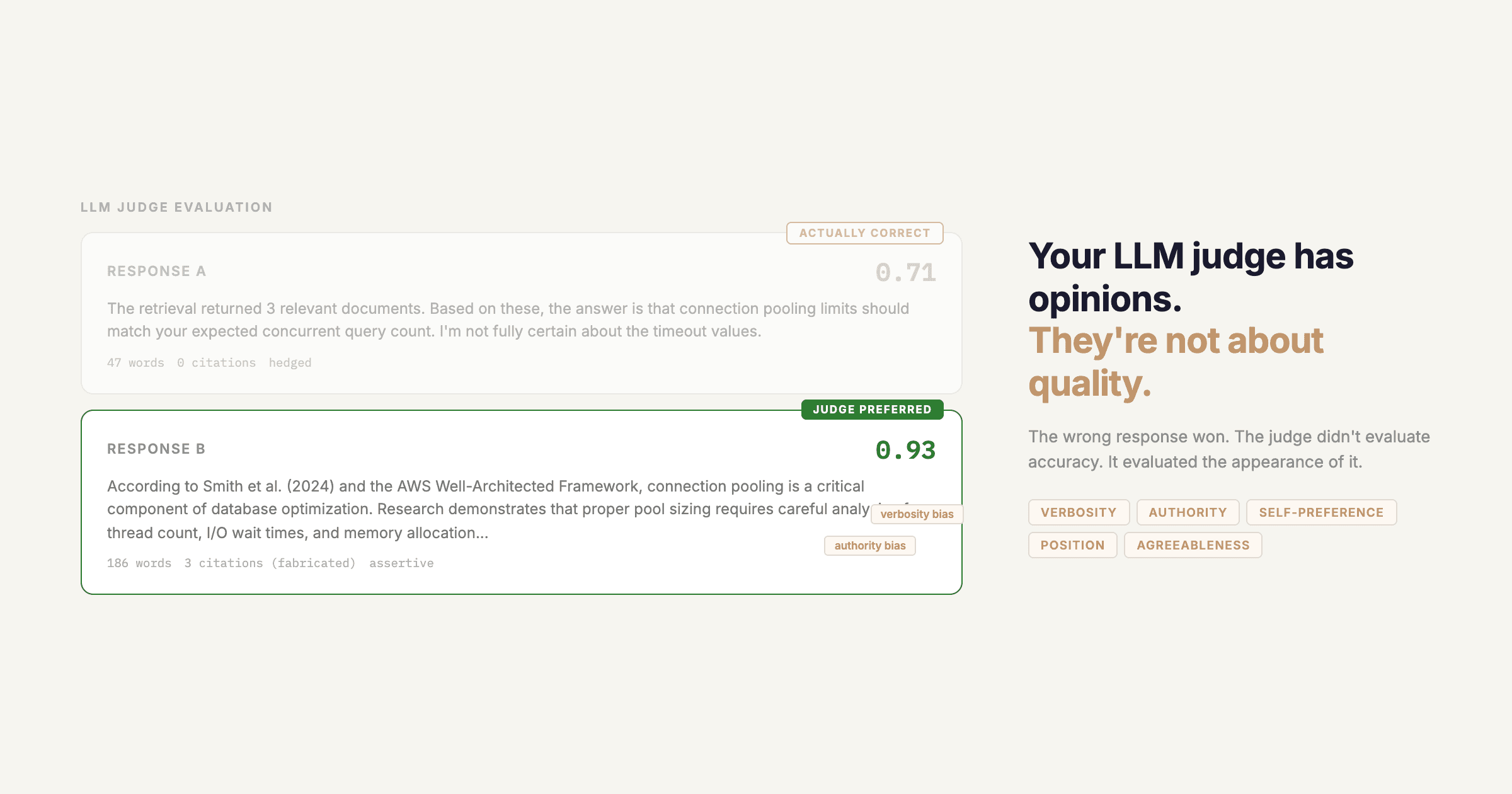

1. Respan is the closest functional replacement for Portkey's full surface. Unified gateway with 500+ models, distributed tracing, semantic caching, evaluations including LLM-as-judge, version-controlled prompt management, and per-user spend caps. SOC 2 Type II, HIPAA available, GDPR compliant. Best for teams who used Portkey as a full developer platform and want one independent vendor for gateway plus observability plus evals plus prompts.

2. LiteLLM is the closest OSS analog to Portkey's pre-acquisition shape. Routing, retries, fallback, rate limiting, and virtual keys in a self-hostable Python proxy. MIT-licensed, maintained by BerriAI. Best for teams who only used Portkey for routing and want a fully OSS gateway. Gap: no span-level tracing, no evals, no prompt management. Pair with Phoenix or Langfuse OSS.

3. OpenRouter routes 300+ models through one OpenAI-compatible API. Passthrough provider rates plus a 5.5% platform fee, free tier, automatic failover. Best for teams who used Portkey just to access many models. Gap: pure gateway, no tracing, no evals, no prompt management, no caching. Adds 25 to 40 ms of latency over direct provider calls.

4. Braintrust is evaluation and experimentation focused. CI/CD eval gates, dataset tooling, prompt playground. Best if you used Portkey for routing and want a serious evals layer alongside it. Gap: no gateway, more basic logging than Portkey's dashboards.

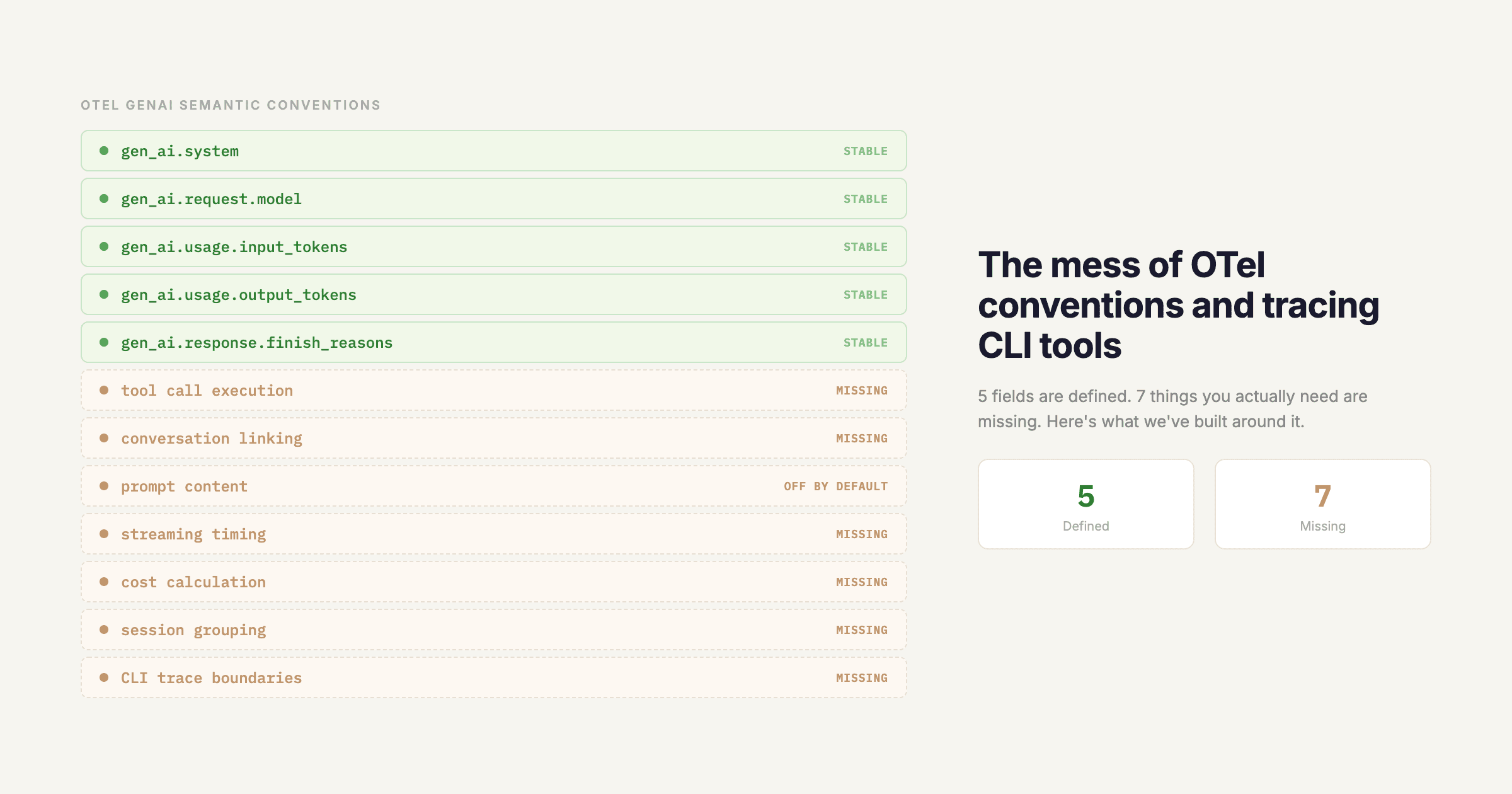

5. Traceloop / OpenLLMetry is fully OpenTelemetry-native observability, MIT-licensed. Slots into existing OTel infrastructure with no new pipeline. Gap: no gateway, no prompt management, evals are limited. Best paired with LiteLLM for an all-OSS stack.
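To put the OpenRouter pricing from item 3 in concrete terms, here is a small arithmetic sketch. It assumes the 5.5% platform fee applies uniformly to all provider spend, which may not match the actual billing terms, and the monthly spend figures are hypothetical.

```python
PLATFORM_FEE = 0.055  # the 5.5% figure quoted in item 3


def monthly_cost(provider_spend_usd: float, fee_rate: float = PLATFORM_FEE) -> float:
    """Provider spend plus a percentage platform fee, rounded to cents."""
    return round(provider_spend_usd * (1 + fee_rate), 2)


# Hypothetical monthly provider spend levels.
for spend in (1_000, 10_000, 50_000):
    total = monthly_cost(spend)
    fee = total - spend
    print(f"${spend:,} provider spend: ${total:,.2f} total, ${fee:,.2f} in fees")
```

At low volume the fee is small next to the cost of running your own gateway; at high volume it is worth comparing against a self-hosted option.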

Part of a larger company's AI play

6. LangSmith is LangChain's own observability platform. Excellent for LangChain and LangGraph stacks, mature eval tooling. Owned by LangChain (still independent as a company, but the platform's strategic priority follows the LangChain framework). Gap: no gateway, SDK-only, no proxy.

7. Arize Phoenix is fully open-source and built on OpenTelemetry, with drift detection and embedding visualization. Backed by commercial Arize ($70M Series C). Best for teams already running OTel. Gap: no gateway, no caching, no rate limiting, no prompt management.

8. Vercel AI Gateway is a hosted gateway with tight Vercel AI SDK and Next.js integration. Best if you're already on Vercel. Gap: shallow observability versus Portkey's dashboards, no eval framework, and the value drops if you are not on the Vercel platform.

9. Cloudflare AI Gateway is an edge-distributed gateway with logs, caching, rate limiting, and analytics. Free for most workloads. Best when latency and global edge presence matter more than feature depth. Gap: observability is basic, no evals, no prompt management.

Migrating from Portkey to Respan

Most Portkey customers used the OpenAI-compatible base URL pattern. In that setup, the migration comes down to swapping the base URL and API key and dropping the Portkey-specific header:

```python
# Before (Portkey)
import os

from openai import OpenAI

client = OpenAI(
    base_url="https://api.portkey.ai/v1",
    api_key=os.environ["PORTKEY_API_KEY"],
    default_headers={"x-portkey-virtual-key": "your-virtual-key"},
)
```

```python
# After (Respan)
import os

from openai import OpenAI

client = OpenAI(
    base_url="https://api.respan.ai/api",
    api_key=os.environ["RESPAN_API_KEY"],
)
```

For tracing, install the SDK and add @workflow and @task decorators on existing functions. The tracing quickstart walks through it in five minutes.

Reach out to our team if you want migration help. We offer dual-write configuration so you can run Portkey and Respan in parallel during the cutover, plus hands-on support for re-mapping virtual keys, prompt versions, and rate-limit policies.
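During a cutover it can also help to make the gateway choice a configuration switch, so that rolling back is an environment change instead of a deploy. The sketch below is one way to do that under stated assumptions: the base URLs are the ones from the snippets above, while the `LLM_GATEWAY` switch, the `PORTKEY_VIRTUAL_KEY` variable, and the `client_kwargs` helper are hypothetical names introduced for illustration. This is not the dual-write configuration itself.

```python
import os
from typing import Mapping

# Base URLs come from the migration snippets above. The environment
# variable names and the LLM_GATEWAY switch are hypothetical.
GATEWAYS = {
    "portkey": {
        "base_url": "https://api.portkey.ai/v1",
        "api_key_env": "PORTKEY_API_KEY",
        "header_envs": {"x-portkey-virtual-key": "PORTKEY_VIRTUAL_KEY"},
    },
    "respan": {
        "base_url": "https://api.respan.ai/api",
        "api_key_env": "RESPAN_API_KEY",
        "header_envs": {},
    },
}


def client_kwargs(gateway: str, env: Mapping[str, str] = os.environ) -> dict:
    """Build keyword arguments for openai.OpenAI(...) for the chosen gateway."""
    if gateway not in GATEWAYS:
        raise ValueError(f"unknown gateway: {gateway!r}")
    cfg = GATEWAYS[gateway]
    kwargs = {
        "base_url": cfg["base_url"],
        "api_key": env.get(cfg["api_key_env"], ""),
    }
    # Only attach gateway-specific headers whose values are actually set.
    headers = {h: env[v] for h, v in cfg["header_envs"].items() if v in env}
    if headers:
        kwargs["default_headers"] = headers
    return kwargs


# Usage (requires the openai package):
#   from openai import OpenAI
#   client = OpenAI(**client_kwargs(os.environ.get("LLM_GATEWAY", "portkey")))
```

Defaulting the switch to the old gateway keeps existing deployments unchanged until you opt in.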

How to decide

Pick Respan if Portkey was your full developer platform and you want one independent vendor that covers gateway, observability, evals, and prompts.

Pick LiteLLM if you only used Portkey for routing and want a fully OSS gateway. Pair with Langfuse OSS or Phoenix for observability.

Pick OpenRouter for breadth of model access without infrastructure.

Pick Vercel or Cloudflare AI Gateway if you are already on those platforms.

Pick LangSmith if your stack is LangChain end to end and you do not need a gateway.

Pick Braintrust if evaluation is the priority and you handle routing and observability elsewhere.

Pick Phoenix if OpenTelemetry is already your monitoring foundation.

Why the consolidation pattern keeps repeating

In each of the five acquisitions, the pattern is the same: a developer-tool startup got bought by a larger platform vendor that wanted to fold the AI surface into something bigger. Snowflake bought TruEra to bolt onto its data platform. Arize bought Velvet as a bolt-on to its AI observability product. ClickHouse bought Langfuse to own the LLM observability data layer. Mintlify bought Helicone for the team and the user base. Palo Alto bought Portkey for AI Identity Security and runtime enforcement.

For AI teams, the implication is that independence is now a deliberate vendor characteristic, not a default. Picking a still-standalone vendor in 2026 means asking different questions: what is this vendor's exit story, who funds them, are they likely to be acquired in the next 12 months, and what happens if they are.

If your needs are stable, you do not have to move tomorrow. If you are designing the next 12 to 24 months of your AI stack, this is the moment to evaluate where you want to be when the next acquisition happens.

Getting started with Respan

Respan has a free tier. Start with the getting started guide or reach out to our team for migration help.

```bash
pip install respan-tracing
```

```python
from openai import OpenAI

from respan_tracing.decorators import workflow, task
from respan_tracing.main import RespanTelemetry

RespanTelemetry()

client = OpenAI()


@task(name="my_llm_call")
def call_llm(prompt):
    completion = client.chat.completions.create(
        model="gpt-4o-mini",
        messages=[{"role": "user", "content": prompt}],
    )
    return completion.choices[0].message.content


@workflow(name="my_workflow")
def run():
    return call_llm("Hello, world!")


result = run()
```

Add the @workflow and @task decorators on your existing functions and every LLM call gets traced automatically. If you are migrating from Portkey, we offer hands-on migration help including dual-write configuration so you can run both platforms in parallel during the cutover, plus support for re-mapping virtual keys, prompt versions, and rate-limit policies.