Building an AI agent is easy; knowing why it's failing is the hard part. As you move from simple chat completions to complex agentic loops with Haystack, the Vercel AI SDK, or LiteLLM, you quickly realize that traditional logging isn't enough. You need traces.

In this comprehensive guide, we dive deep into the industry standard: OpenTelemetry (OTel). We'll explore the technical "How-To" for the most popular LLM stacks and compare manual setup vs. the streamlined Keywords AI approach. By the end, you'll understand not just how to instrument your LLM applications, but why OpenTelemetry has become the de facto standard for production AI observability.

Deep Dive: What is OpenTelemetry (OTel)?

OpenTelemetry is not a backend; it is a vendor-neutral, open source observability framework. It provides a standardized set of APIs, SDKs, and protocols (OTLP) to collect "telemetry"—Traces, Metrics, and Logs. Think of it as the "USB-C of observability": a universal standard that works with any tool.

As an open source project under the Cloud Native Computing Foundation (CNCF), OpenTelemetry gives you an open source foundation for LLM monitoring that doesn't lock you into a proprietary platform. This makes it a strong base for building LLM observability into your applications.

The Three Pillars of Observability

OpenTelemetry is built around three core data types:

1. Traces: A trace represents the entire lifecycle of a request as it flows through your system. In LLM applications, a trace might capture:

- The initial user query

- RAG retrieval operations

- Multiple LLM calls (if using agentic loops)

- Post-processing steps

- Final response delivery

2. Metrics: Quantitative measurements over time. For LLMs, critical metrics include:

- Token usage per model

- Cost per request

- Latency percentiles (p50, p95, p99)

- Error rates

- Throughput (requests per second)

3. Logs: Structured events with timestamps. While logs are less emphasized in OTel (compared to traces and metrics), they're still valuable for capturing:

- Error messages

- Debug information

- Audit trails
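As a rough illustration of the metrics listed above, the sketch below derives latency percentiles and an error rate from a handful of made-up request records using only the Python standard library. The `RequestRecord` type and the sample values are assumptions for illustration, not OTel types; a real metrics backend would typically compute these from exported histogram data.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class RequestRecord:
    """Hypothetical per-request measurement (not an OTel type)."""
    latency_ms: float
    ok: bool


def latency_percentiles(records: list[RequestRecord]) -> dict[str, float]:
    """Return p50/p95/p99 latency using inclusive interpolation."""
    # quantiles(n=100) returns the 99 cut points p1..p99
    cuts = quantiles([r.latency_ms for r in records], n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def error_rate(records: list[RequestRecord]) -> float:
    """Fraction of requests that failed."""
    return sum(1 for r in records if not r.ok) / len(records)


# Ten sample requests with latencies 100..1000 ms; the 900 ms one failed
records = [RequestRecord(latency_ms=float(ms), ok=(ms != 900)) for ms in range(100, 1100, 100)]
stats = latency_percentiles(records)
rate = error_rate(records)
```

Percentiles matter more than averages here because a few slow LLM calls can dominate the user experience while barely moving the mean.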

How OpenTelemetry Works: The Architecture

In the context of LLMs, OTel works by creating a Trace, which represents the entire lifecycle of a user request. Inside that trace are Spans.

- Trace: The "Macro" view (e.g., a user asks for a summary of a PDF, which triggers retrieval, embedding, and generation).

- Span: The "Micro" view (e.g., the embedding call, the vector search, the LLM completion, the post-processing step).

Each span contains:

- Name: What operation was performed (e.g., "llm.completion")

- Attributes: Key-value pairs (e.g., llm.model="gpt-4", llm.tokens.prompt=150)

- Events: Timestamped annotations (e.g., "retrieval.started", "model.selected")

- Status: Success, error, or unset

- Duration: How long the operation took
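To make the span fields above concrete, here is a minimal sketch of a span as a plain data structure. `SketchSpan` is a hypothetical stand-in written for this guide; the real OTel SDK span has a richer API and also carries trace and parent identifiers.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class SketchSpan:
    """Toy stand-in for a span; not the OTel SDK class."""
    name: str
    attributes: dict[str, Any] = field(default_factory=dict)
    events: list[tuple[float, str]] = field(default_factory=list)
    status: str = "UNSET"  # UNSET, OK, or ERROR
    start: float = field(default_factory=time.monotonic)
    end_time: Optional[float] = None

    def add_event(self, name: str) -> None:
        """Record a timestamped annotation."""
        self.events.append((time.monotonic(), name))

    def end(self, status: str = "OK") -> None:
        """Close the span and fix its final status."""
        self.status = status
        self.end_time = time.monotonic()

    @property
    def duration_ms(self) -> Optional[float]:
        """Elapsed time in milliseconds, or None while the span is open."""
        if self.end_time is None:
            return None
        return (self.end_time - self.start) * 1000


span = SketchSpan("llm.completion", attributes={"llm.model": "gpt-4", "llm.tokens.prompt": 150})
span.add_event("model.selected")
span.end()
```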

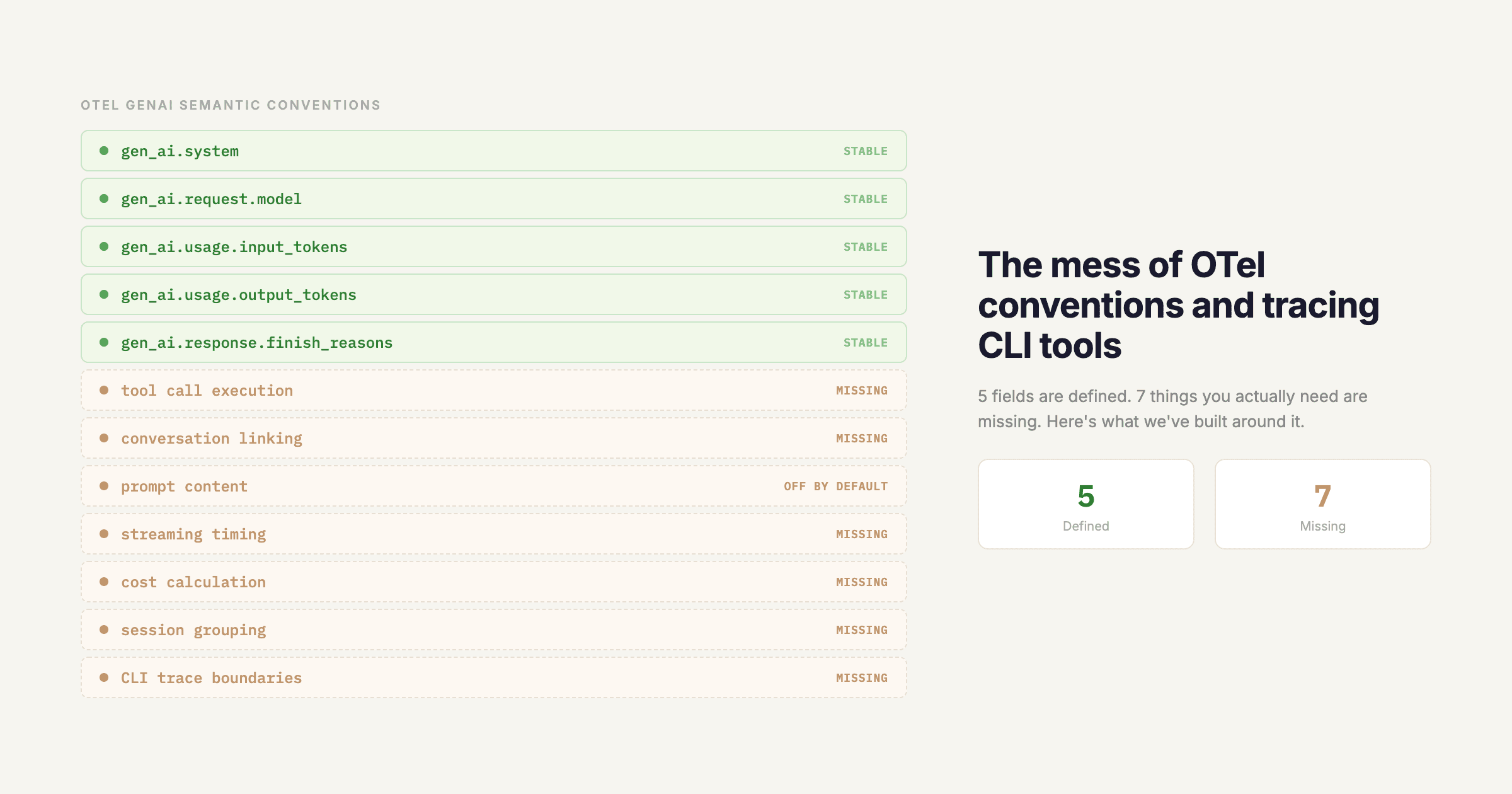

The OpenTelemetry Specification

OpenTelemetry follows a strict specification that ensures interoperability. The spec defines:

- Semantic Conventions: Standard attribute names (e.g., http.method, db.query, llm.model)

- OTLP Protocol: The wire format for sending telemetry data

- API Contracts: How SDKs must behave across languages

This standardization means you can instrument your Python Haystack pipeline, your TypeScript Vercel AI SDK app, and your LiteLLM proxy, and they'll all produce compatible traces that can be viewed in a single dashboard.

OpenTelemetry vs. Proprietary Solutions

Unlike vendor-specific solutions (Datadog APM, New Relic, etc.), OpenTelemetry gives you:

- Vendor Freedom: Instrument once, export anywhere

- Community Standards: Built by the CNCF with industry-wide adoption

- Future-Proofing: Your instrumentation code doesn't break when you switch backends

- Cost Efficiency: Avoid vendor lock-in and choose the most cost-effective backend

The Technical Stack: Understanding OTel Components

To get OpenTelemetry running in production, your system needs four components working together:

Component 1: Instrumentation

The code inside your application that generates spans. This can be:

- Automatic: Using auto-instrumentation libraries that hook into frameworks

- Manual: Writing custom spans using the OTel API

- Hybrid: Combining both approaches

For LLM applications, you typically need manual instrumentation because:

- LLM frameworks don't always have built-in OTel support

- You need to capture LLM-specific attributes (tokens, costs, model IDs)

- You want fine-grained control over what gets traced

Component 2: SDK (Software Development Kit)

The language-specific implementation of the OpenTelemetry API. Popular SDKs include:

- @opentelemetry/sdk-node (Node.js/TypeScript)

- opentelemetry-sdk (Python)

- opentelemetry-js (Browser/Edge)

The SDK handles:

- Span creation and management

- Context propagation (passing trace context across async boundaries)

- Resource detection (identifying your service, host, etc.)
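Context propagation is easiest to see at a service boundary, where the trace context travels in the W3C `traceparent` HTTP header (`version-traceid-spanid-flags`). The sketch below builds and parses that header with the standard library. The `SpanContext`, `inject`, `extract`, and `child_of` names are illustrative and are not the OTel SDK's propagator API, which handles this for you.

```python
import re
import secrets
from typing import NamedTuple, Optional

# Version 00: 32 hex chars of trace ID, 16 hex chars of span ID, 2 hex chars of flags
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


class SpanContext(NamedTuple):
    trace_id: str
    span_id: str
    sampled: bool


def inject(ctx: SpanContext) -> dict[str, str]:
    """Serialize the context into outgoing request headers."""
    flags = "01" if ctx.sampled else "00"
    return {"traceparent": f"00-{ctx.trace_id}-{ctx.span_id}-{flags}"}


def extract(headers: dict[str, str]) -> Optional[SpanContext]:
    """Parse incoming headers; return None if the header is missing or invalid."""
    match = TRACEPARENT_RE.match(headers.get("traceparent", ""))
    if not match:
        return None
    trace_id, span_id, flags = match.groups()
    # All-zero IDs are invalid
    if set(trace_id) == {"0"} or set(span_id) == {"0"}:
        return None
    return SpanContext(trace_id, span_id, sampled=bool(int(flags, 16) & 0x01))


def child_of(parent: SpanContext) -> SpanContext:
    """A child span keeps the trace ID and gets a fresh span ID."""
    return SpanContext(parent.trace_id, secrets.token_hex(8), parent.sampled)


parent = SpanContext("4bf92f3577b34da6a3ce929d0e0e4736", "00f067aa0ba902b7", True)
headers = inject(parent)
received = extract(headers)
child = child_of(received)
```

Because the trace ID survives each hop, spans emitted by different services can be stitched into one trace by the backend.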

Component 3: Exporter

The component that sends telemetry data to your backend. Common exporters:

- OTLP Exporter: Sends data via the OpenTelemetry Protocol (recommended)

- Jaeger Exporter: Direct export to Jaeger

- Zipkin Exporter: Direct export to Zipkin

- Console Exporter: For debugging (prints to stdout)

For LLM observability, you'll typically use the OTLP HTTP exporter to send data to an LLM observability platform like Keywords AI, which provides LLM-specific dashboards and analytics. When choosing the best LLM observability platform for your needs, consider factors like cost tracking, token usage analytics, and integration with your existing stack.

Component 4: Backend/Collector

Where your telemetry data lives and gets visualized. You have two options:

Option A: Direct Export. Your application exports directly to a backend (e.g., Keywords AI, Datadog, Grafana Cloud).

Option B: OpenTelemetry Collector. A middleman service that receives, processes, and routes telemetry data. The collector is useful for:

- Batch processing (reducing API calls)

- Data transformation (enriching spans with metadata)

- Multi-backend routing (sending to multiple destinations)

- Sampling (reducing data volume)

For most LLM applications, direct export is simpler and sufficient. The collector adds operational complexity that may not be necessary unless you're running at massive scale.
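Sampling is the least intuitive of the collector features listed above, so here is a small sketch of ratio-based sampling keyed on the trace ID. It is written in the spirit of OTel's trace-ID ratio sampler but is an illustration under that assumption, not the SDK's implementation; `should_sample` is a hypothetical helper.

```python
import secrets

TRACE_ID_LIMIT = 1 << 64  # only the low 64 bits of the 128-bit trace ID are compared


def should_sample(trace_id: int, ratio: float) -> bool:
    """Keep a trace when the low 64 bits of its ID fall under ratio * 2**64."""
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    bound = round(ratio * TRACE_ID_LIMIT)
    return (trace_id & (TRACE_ID_LIMIT - 1)) < bound


# Roughly 10% of random trace IDs should be kept at ratio=0.1
trace_ids = [secrets.randbits(128) for _ in range(10_000)]
kept = sum(should_sample(t, 0.1) for t in trace_ids)
```

Deciding from the trace ID rather than a fresh random number means every service reaches the same keep-or-drop decision for a given trace, so sampled traces stay complete.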

Why OTel is Critical for LLM Observability

LLM applications are fundamentally different from traditional web applications. They're non-deterministic, stateful, and involve complex multi-step workflows. When an agent fails, it could be because of:

- A prompt injection attack

- A retrieval error (wrong documents returned)

- A model timeout

- A rate limit hit

- A cost budget exceeded

- A hallucination that went undetected

OpenTelemetry's Distributed Tracing allows you to follow the request as it travels across different microservices, LLM providers, and infrastructure components. This is critical for debugging production issues.

The LLM Observability Challenge

Traditional application monitoring focuses on:

- CPU usage

- Memory consumption

- Request latency

- Error rates

LLM observability requires tracking:

- Token usage and costs across different models (GPT-4 vs. Claude vs. Gemini)

- Prompt and response quality (hallucinations, relevance, accuracy)

- Model performance (latency, throughput, error rates per model)

- User interactions and conversation flows (multi-turn conversations)

- RAG pipeline performance (retrieval accuracy, context relevance, embedding quality)

- Chain execution (multi-step workflows, tool calls, function calling)

- Cost optimization (identifying which models are most cost-effective for specific tasks)
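As one example of the tracking described above, the sketch below aggregates cost per model from span attributes. The attribute names follow the `llm.*` examples used in this guide, and the prices are illustrative per-1,000-token figures that should be checked against your provider's current pricing; `span_cost` and `cost_by_model` are hypothetical helpers.

```python
from collections import defaultdict
from typing import Optional

# Illustrative prices in USD per 1,000 tokens (assumed values, verify before use)
PRICING = {
    "gpt-4": {"prompt": 0.03, "completion": 0.06},
    "gpt-3.5-turbo": {"prompt": 0.0015, "completion": 0.002},
}


def span_cost(attrs: dict) -> Optional[float]:
    """Cost of one LLM span, or None when the model has no known price."""
    price = PRICING.get(attrs.get("llm.model"))
    if price is None:
        return None
    return (
        attrs.get("llm.tokens.prompt", 0) / 1000 * price["prompt"]
        + attrs.get("llm.tokens.completion", 0) / 1000 * price["completion"]
    )


def cost_by_model(spans: list[dict]) -> dict[str, float]:
    """Sum span costs per model, skipping spans that cannot be priced."""
    totals: dict[str, float] = defaultdict(float)
    for attrs in spans:
        cost = span_cost(attrs)
        if cost is not None:
            totals[attrs["llm.model"]] += cost
    return dict(totals)


spans = [
    {"llm.model": "gpt-4", "llm.tokens.prompt": 250, "llm.tokens.completion": 50},
    {"llm.model": "gpt-4", "llm.tokens.prompt": 1000, "llm.tokens.completion": 0},
    {"llm.model": "gpt-3.5-turbo", "llm.tokens.prompt": 2000, "llm.tokens.completion": 1000},
    {"llm.model": "some-unpriced-model", "llm.tokens.prompt": 10, "llm.tokens.completion": 10},
]
totals = cost_by_model(spans)
```

Returning `None` for unknown models, instead of silently falling back to another model's price, keeps pricing gaps visible.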

Why Standard Logging Falls Short

Consider this scenario: A user reports that your AI agent gave a wrong answer. With standard logging, you might see:

[INFO] User query: "What is the capital of France?"
[INFO] LLM response: "Paris"

But you don't know:

- Which model was used?

- How many tokens were consumed?

- What was the latency?

- What documents were retrieved (if using RAG)?

- What was the cost?

- Was there an error that was silently handled?

With OpenTelemetry traces, you get a complete picture:

Trace: user_query_abc123
├─ Span: rag.retrieval
│  ├─ Attributes: retrieval.doc_count=5, retrieval.latency_ms=120
│  └─ Events: retrieval.started, retrieval.completed
├─ Span: llm.completion
│  ├─ Attributes: llm.model=gpt-4, llm.tokens.prompt=250, llm.tokens.completion=50
│  ├─ Attributes: llm.cost=0.0105, llm.latency_ms=850
│  └─ Status: OK
└─ Span: post_processing
   └─ Attributes: processing.type=sentiment_analysis
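A backend reconstructs a view like the one above from flat span records that each point at their parent. The sketch below shows one way to do that; the record fields (`span_id`, `parent_id`, `name`, `duration_ms`) and the `render_trace` helper are assumptions for illustration, not an OTel data format.

```python
from collections import defaultdict


def render_trace(spans: list[dict]) -> str:
    """Render flat span records as an indented tree, roots first."""
    children: dict = defaultdict(list)
    for record in spans:
        children[record["parent_id"]].append(record)

    lines: list[str] = []

    def walk(parent_id, prefix: str) -> None:
        kids = children[parent_id]
        for index, record in enumerate(kids):
            last = index == len(kids) - 1
            branch = "└─ " if last else "├─ "
            lines.append(f"{prefix}{branch}{record['name']} ({record['duration_ms']} ms)")
            # Children of the last sibling get blank padding, others a vertical rule
            walk(record["span_id"], prefix + ("   " if last else "│  "))

    walk(None, "")
    return "\n".join(lines)


spans = [
    {"span_id": "a", "parent_id": None, "name": "user_query", "duration_ms": 1000},
    {"span_id": "b", "parent_id": "a", "name": "rag.retrieval", "duration_ms": 120},
    {"span_id": "c", "parent_id": "a", "name": "llm.completion", "duration_ms": 850},
]
tree = render_trace(spans)
```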

The Business Case for LLM Observability

Beyond debugging, observability drives business outcomes:

- Cost Optimization: Identify which models are most cost-effective for specific use cases

- Quality Assurance: Track hallucination rates and response quality over time

- User Experience: Understand latency patterns and optimize for user satisfaction

- Compliance: Audit trails for regulated industries (healthcare, finance)

- Capacity Planning: Understand usage patterns to scale infrastructure appropriately

When evaluating LLM observability platforms, look for solutions that build on open source standards through OpenTelemetry integration. A strong LLM observability platform will offer automatic cost tracking, token usage analytics, and integration with frameworks like Vercel AI SDK, Haystack, and LiteLLM.

Setup: Vercel AI SDK Telemetry + OpenTelemetry

The Vercel AI SDK is the go-to choice for Next.js developers building AI applications. It provides a unified interface for working with multiple LLM providers (OpenAI, Anthropic, Google, etc.) and handles streaming, tool calling, and structured outputs.

However, managing Vercel AI SDK telemetry with OpenTelemetry in a serverless environment (Vercel Functions) comes with significant overhead. Setting up proper observability for Vercel AI SDK requires careful configuration of OpenTelemetry instrumentation. Let's explore both approaches.

Option A: Manual OTel Setup (The Hard Way)

To manually instrument Vercel AI SDK, you must use the @opentelemetry/sdk-node package and configure an instrumentation.ts file. Here's what's involved:

Step 1: Install Dependencies

npm install @opentelemetry/api @opentelemetry/sdk-node @opentelemetry/exporter-trace-otlp-http @opentelemetry/instrumentation @opentelemetry/instrumentation-http @opentelemetry/instrumentation-express @opentelemetry/resources @opentelemetry/semantic-conventions

Step 2: Create instrumentation.ts

Next.js looks for an instrumentation.ts file in your project root and calls its exported register() function once at server startup, before your application code handles requests:

// instrumentation.ts
import { NodeSDK } from '@opentelemetry/sdk-node';
import { OTLPTraceExporter } from '@opentelemetry/exporter-trace-otlp-http';
import { Resource } from '@opentelemetry/resources';
import { SemanticResourceAttributes } from '@opentelemetry/semantic-conventions';
import { HttpInstrumentation } from '@opentelemetry/instrumentation-http';
import { ExpressInstrumentation } from '@opentelemetry/instrumentation-express';

const sdk = new NodeSDK({
  resource: new Resource({
    [SemanticResourceAttributes.SERVICE_NAME]: 'vercel-ai-app',
    [SemanticResourceAttributes.SERVICE_VERSION]: process.env.VERCEL_GIT_COMMIT_SHA || '1.0.0',
    [SemanticResourceAttributes.DEPLOYMENT_ENVIRONMENT]: process.env.VERCEL_ENV || 'development',
  }),
  traceExporter: new OTLPTraceExporter({
    url: process.env.OTEL_EXPORTER_OTLP_ENDPOINT || 'https://api.keywordsai.co/v1/traces',
    headers: {
      'Authorization': `Bearer ${process.env.KEYWORDSAI_API_KEY}`,
      'Content-Type': 'application/json',
    },
  }),
  instrumentations: [
    new HttpInstrumentation(),
    new ExpressInstrumentation(),
  ],
});

// Next.js calls register() once when the server starts
export function register() {
  sdk.start();

  // Ensure spans are flushed before the process exits
  process.on('SIGTERM', () => {
    sdk.shutdown()
      .then(() => console.log('OpenTelemetry terminated'))
      .catch((error) => console.log('Error terminating OpenTelemetry', error))
      .finally(() => process.exit(0));
  });
}

Step 3: Configure next.config.js

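On Next.js 14 and earlier, you need to enable the experimental instrumentationHook (Next.js 15 and later load instrumentation.ts by default, so this step can be skipped):

// next.config.js
module.exports = {
  experimental: {
    instrumentationHook: true,
  },
};

Step 4: Instrument Your API Routes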

Now you need to manually wrap every AI SDK call with spans:

// app/api/chat/route.ts
import { openai } from '@ai-sdk/openai';
import { generateText, streamText } from 'ai';
import { trace, context, SpanStatusCode } from '@opentelemetry/api';
import type { Span } from '@opentelemetry/api';
import { NextRequest, NextResponse } from 'next/server';

const tracer = trace.getTracer('vercel-ai-sdk');

export async function POST(request: NextRequest) {
  const { messages, stream } = await request.json();

  // Start a trace for this request
  const span = tracer.startSpan('ai.chat', {
    attributes: {
      'llm.framework': 'vercel-ai-sdk',
      'llm.provider': 'openai',
      'http.method': 'POST',
      'http.route': '/api/chat',
    },
  });

  try {
    const activeContext = trace.setSpan(context.active(), span);
    return await context.with(activeContext, async () => {
      if (stream) {
        return handleStreaming(messages, span);
      } else {
        return handleNonStreaming(messages, span);
      }
    });
  } catch (error) {
    span.recordException(error as Error);
    span.setStatus({ code: SpanStatusCode.ERROR, message: (error as Error).message });
    throw error;
  } finally {
    span.end();
  }
}

async function handleNonStreaming(messages: any[], span: Span) {
  // startSpan has no `parent` option; the parent comes from the context argument
  const generateSpan = tracer.startSpan(
    'ai.generate',
    {},
    trace.setSpan(context.active(), span)
  );

  try {
    generateSpan.setAttributes({
      'llm.messages.count': messages.length,
      'llm.messages.last': JSON.stringify(messages[messages.length - 1]),
    });

    const { text, usage, finishReason } = await generateText({
      model: openai('gpt-4'),
      messages: messages,
    });

    // Extract and set LLM-specific attributes
    generateSpan.setAttributes({
      'llm.model': 'gpt-4',
      'llm.response': text,
      'llm.tokens.prompt': usage.promptTokens,
      'llm.tokens.completion': usage.completionTokens,
      'llm.tokens.total': usage.totalTokens,
      'llm.finish_reason': finishReason,
      'llm.cost': calculateCost(usage.promptTokens, usage.completionTokens, 'gpt-4'),
    });

    generateSpan.setStatus({ code: SpanStatusCode.OK });
    return NextResponse.json({ text });
  } catch (error) {
    generateSpan.recordException(error as Error);
    generateSpan.setStatus({ code: SpanStatusCode.ERROR, message: (error as Error).message });
    throw error;
  } finally {
    generateSpan.end();
  }
}

async function handleStreaming(messages: any[], span: Span) {
  const streamSpan = tracer.startSpan(
    'ai.stream',
    {},
    trace.setSpan(context.active(), span)
  );

  try {
    streamSpan.setAttribute('llm.streaming', true);

    const result = await streamText({
      model: openai('gpt-4'),
      messages: messages,
    });

    streamSpan.setAttribute('llm.model', 'gpt-4');

    // Note: this span ends when the response object is returned,
    // not when the stream finishes, so it does not cover the full generation
    return result.toDataStreamResponse();
  } catch (error) {
    streamSpan.recordException(error as Error);
    streamSpan.setStatus({ code: SpanStatusCode.ERROR, message: (error as Error).message });
    throw error;
  } finally {
    streamSpan.end();
  }
}

// Prices are USD per token, derived from per-1,000-token list prices
function calculateCost(
  promptTokens: number,
  completionTokens: number,
  model: string
): number {
  const pricing: Record<string, { prompt: number; completion: number }> = {
    'gpt-4': { prompt: 0.03 / 1000, completion: 0.06 / 1000 },
    'gpt-4-turbo': { prompt: 0.01 / 1000, completion: 0.03 / 1000 },
    'gpt-3.5-turbo': { prompt: 0.0015 / 1000, completion: 0.002 / 1000 },
  };

  const modelPricing = pricing[model] || pricing['gpt-3.5-turbo'];
  return (promptTokens * modelPricing.prompt) +
    (completionTokens * modelPricing.completion);
}

Step 5: Handle Edge Runtime Issues

Vercel's Edge Runtime supports only a subset of Node.js APIs, which means OpenTelemetry SDKs that rely on Node.js won't work there. You have two options:

- Switch to Node.js Runtime: Add export const runtime = 'nodejs' to your route

- Use Edge-Compatible Alternatives: Use Web APIs and manual instrumentation (more complex)

The Challenges with Manual Setup

- Runtime Compatibility: Edge vs. Node.js runtime conflicts

- Span Lifecycle Management: Ensuring spans are flushed before serverless functions terminate

- Cost Calculation: You must manually implement pricing logic for every model

- Context Propagation: Handling async boundaries and context loss

- Maintenance Burden: Every SDK update might break your instrumentation
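The span lifecycle problem in the list above comes from batching: spans sit in an in-memory buffer until a batch is exported, so anything still buffered when the function terminates is lost. The sketch below illustrates the idea with a toy buffer. `BatchBuffer` is a hypothetical class written for this guide; the SDK's batch processor additionally flushes on a timer in the background.

```python
from typing import Callable


class BatchBuffer:
    """Toy batching buffer that exports spans in groups."""

    def __init__(self, export: Callable[[list[dict]], None], max_batch_size: int = 3):
        self._export = export
        self._max_batch_size = max_batch_size
        self._pending: list[dict] = []

    def on_end(self, span: dict) -> None:
        """Queue a finished span; export once the batch is full."""
        self._pending.append(span)
        if len(self._pending) >= self._max_batch_size:
            self.force_flush()

    def force_flush(self) -> None:
        """Export whatever is queued, even if the batch is not full."""
        if self._pending:
            batch, self._pending = self._pending, []
            self._export(batch)


exported: list[list[dict]] = []
buffer = BatchBuffer(export=exported.append, max_batch_size=3)
for i in range(4):
    buffer.on_end({"name": f"span-{i}"})

# One span is still pending here; without an explicit flush it would be lost
batches_before_flush = len(exported)
buffer.force_flush()
```

In a serverless handler, the equivalent of `force_flush()` has to run before the response is returned or the runtime freezes the process.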

Read the full manual guide: Vercel OTel Docs

Option B: The Keywords AI Way (Recommended)

Keywords AI replaces dozens of lines of configuration with a single package. We handle the runtime compatibility, the mapping of LLM-specific metadata (tokens, costs, model IDs), and the span lifecycle automatically.

Full setup guide: Vercel AI SDK + Keywords AI tracing

Step 1: Install Keywords AI Tracing

npm install @keywordsai/tracing-node

Step 2: Initialize in instrumentation.ts

// instrumentation.ts
import { KeywordsTracer } from '@keywordsai/tracing-node';

KeywordsTracer.init({
  apiKey: process.env.KEYWORDSAI_API_KEY,
  serviceName: 'vercel-ai-app',
});

That's it. No manual SDK configuration, no exporter setup, no span lifecycle management.

Step 3: Use in Your API Routes

// app/api/chat/route.ts
import { openai } from '@ai-sdk/openai';
import { generateText, streamText } from 'ai';
import { NextRequest, NextResponse } from 'next/server';

export async function POST(request: NextRequest) {
  const { messages, stream } = await request.json();

  if (stream) {
    const result = await streamText({
      model: openai('gpt-4'),
      messages: messages,
    });
    return result.toDataStreamResponse();
  } else {
    const { text } = await generateText({
      model: openai('gpt-4'),
      messages: messages,
    });
    return NextResponse.json({ text });
  }
}

That's it. Keywords AI automatically:

- Detects Vercel AI SDK calls

- Creates spans with proper hierarchy

- Extracts token usage, costs, and model information

- Handles streaming vs. non-streaming

- Works in both Edge and Node.js runtimes

- Flushes spans before function termination

The Benefits

- 2-Minute Setup: versus 2-4 hours for manual setup

- Zero Maintenance: Updates automatically with SDK changes

- Automatic Cost Tracking: No manual pricing calculations

- Built-in Dashboard: No need for separate visualization tools

- Edge Runtime Support: Works out of the box

Setup: Haystack + OpenTelemetry

Haystack by deepset is a powerhouse for Python-based RAG pipelines. It's designed for production-ready LLM applications with built-in support for document stores, retrievers, generators, and complex pipelines.

Haystack has built-in support for OpenTelemetry, but the "wiring" is left to you. Let's compare the manual approach vs. the Keywords AI way.

Option A: Manual OTel Setup (The Hard Way)

For Haystack, you need to set up a Python tracer provider and link it to Haystack's internal tracing backend. Here's the complete setup:

Step 1: Install Dependencies

pip install haystack-ai opentelemetry-api opentelemetry-sdk opentelemetry-exporter-otlp-proto-http opentelemetry-instrumentation

Step 2: Initialize OpenTelemetry Provider

# tracing_setup.py
import os

from opentelemetry import trace
from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter
from opentelemetry.sdk.resources import Resource
from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.export import BatchSpanProcessor
# The Python semantic conventions live in the opentelemetry.semconv module
from opentelemetry.semconv.resource import ResourceAttributes

# Create resource with service information
resource = Resource.create({
    ResourceAttributes.SERVICE_NAME: "haystack-rag-app",
    ResourceAttributes.SERVICE_VERSION: os.getenv("APP_VERSION", "1.0.0"),
    ResourceAttributes.DEPLOYMENT_ENVIRONMENT: os.getenv("ENVIRONMENT", "production"),
})

# Initialize tracer provider
provider = TracerProvider(resource=resource)
trace.set_tracer_provider(provider)

# Create OTLP exporter
exporter = OTLPSpanExporter(
    endpoint="https://api.keywordsai.co/v1/traces",
    headers={
        "Authorization": f"Bearer {os.getenv('KEYWORDSAI_API_KEY')}",
        "Content-Type": "application/json",
    },
)

# Add batch processor
processor = BatchSpanProcessor(exporter)
provider.add_span_processor(processor)

# Get tracer
tracer = trace.get_tracer(__name__)

Step 3: Configure Haystack to Use OpenTelemetry

Haystack has a tracing abstraction that you need to connect to OpenTelemetry:

# haystack_tracing.py
import contextlib
from typing import Any, Dict, Optional

from haystack import tracing
from haystack.tracing import OpenTelemetryTracer
from opentelemetry import trace

# Haystack tag names mapped to the LLM attribute names used in this guide
TAG_TO_ATTRIBUTE = {
    "model": "llm.model",
    "provider": "llm.provider",
    "prompt_tokens": "llm.tokens.prompt",
    "completion_tokens": "llm.tokens.completion",
}


class CustomOpenTelemetryTracer(OpenTelemetryTracer):
    """Custom tracer that adds LLM-specific attributes"""

    def __init__(self):
        super().__init__(trace.get_tracer("haystack"))

    @contextlib.contextmanager
    def trace(self, operation_name: str, tags: Optional[Dict[str, Any]] = None, **kwargs):
        """Open the span via the parent class, then set the mapped attributes"""
        # Haystack expects trace() to be a context manager that yields a span
        with super().trace(operation_name, **kwargs) as span:
            for key, value in (tags or {}).items():
                if key in TAG_TO_ATTRIBUTE:
                    span.set_tag(TAG_TO_ATTRIBUTE[key], value)
                else:
                    span.set_tag(f"haystack.{key}", str(value))
            yield span


# Initialize Haystack tracing
haystack_tracer = CustomOpenTelemetryTracer()
tracing.enable_tracing(haystack_tracer)

Step 4: Instrument Your Haystack Pipeline

Now you need to manually instrument every component in your pipeline:

# pipeline.py
import atexit

from haystack import Pipeline
from haystack.components.builders import PromptBuilder
from haystack.components.generators import OpenAIGenerator
from haystack.components.retrievers.in_memory import InMemoryBM25Retriever
from haystack.document_stores.in_memory import InMemoryDocumentStore
from haystack.utils import Secret
from opentelemetry import trace

# Import tracing setup
from tracing_setup import tracer


def create_rag_pipeline():
    """Create a RAG pipeline with manual OpenTelemetry instrumentation"""
    # Document store
    document_store = InMemoryDocumentStore()

    # Retriever
    retriever = InMemoryBM25Retriever(
        document_store=document_store, top_k=5
    )

    # Prompt builder
    prompt_template = """
Given the following information, answer the question.

Context:
{% for document in documents %}
{{ document.content }}
{% endfor %}

Question: {{ query }}
Answer:
"""
    prompt_builder = PromptBuilder(template=prompt_template)

    # LLM generator (Haystack expects a Secret, not a raw string)
    generator = OpenAIGenerator(
        api_key=Secret.from_env_var("OPENAI_API_KEY")
    )

    # Create pipeline
    pipeline = Pipeline()
    pipeline.add_component("retriever", retriever)
    pipeline.add_component("prompt_builder", prompt_builder)
    pipeline.add_component("llm", generator)

    pipeline.connect("retriever", "prompt_builder.documents")
    pipeline.connect("prompt_builder", "llm.prompt")

    return pipeline


def run_rag_query(query: str, pipeline: Pipeline):
    """Run a RAG query with full OpenTelemetry tracing"""
    # Start root span
    with tracer.start_as_current_span("haystack.rag_pipeline") as root_span:
        root_span.set_attribute("query", query)
        root_span.set_attribute("llm.framework", "haystack")

        # Retrieval span
        with tracer.start_as_current_span("haystack.retrieval") as retrieval_span:
            documents = pipeline.get_component("retriever").run(
                query=query
            )
            retrieval_span.set_attribute(
                "retrieval.doc_count", len(documents["documents"])
            )
            retrieval_span.set_attribute("retrieval.query", query)

        # Prompt building span
        with tracer.start_as_current_span("haystack.prompt_building") as prompt_span:
            prompt = pipeline.get_component("prompt_builder").run(
                query=query,
                documents=documents["documents"]
            )
            prompt_span.set_attribute(
                "prompt.length", len(prompt["prompt"])
            )

        # LLM generation span
        with tracer.start_as_current_span("haystack.generation") as gen_span:
            response = pipeline.get_component("llm").run(
                prompt=prompt["prompt"]
            )

            # Generators return a dict: {"replies": [...], "meta": [{...}]}
            meta = (response.get("meta") or [{}])[0]
            usage = meta.get("usage") or {}
            if usage:
                gen_span.set_attribute(
                    "llm.tokens.prompt", usage.get("prompt_tokens", 0)
                )
                gen_span.set_attribute(
                    "llm.tokens.completion", usage.get("completion_tokens", 0)
                )
                gen_span.set_attribute(
                    "llm.tokens.total", usage.get("total_tokens", 0)
                )

            if "model" in meta:
                gen_span.set_attribute("llm.model", meta["model"])

            gen_span.set_attribute("llm.cost", calculate_llm_cost(response))
            gen_span.set_attribute("llm.response", response["replies"][0])

        return response


def calculate_llm_cost(response):
    """Manually calculate LLM cost"""
    meta = (response.get("meta") or [{}])[0]
    usage = meta.get("usage")
    if not usage:
        return 0

    model = meta.get("model", "gpt-3.5-turbo")

    # USD per token, derived from per-1,000-token list prices
    pricing = {
        "gpt-4": {"prompt": 0.03 / 1000, "completion": 0.06 / 1000},
        "gpt-4-turbo": {"prompt": 0.01 / 1000, "completion": 0.03 / 1000},
        "gpt-3.5-turbo": {"prompt": 0.0015 / 1000, "completion": 0.002 / 1000},
        "claude-3-opus": {"prompt": 0.015 / 1000, "completion": 0.075 / 1000},
    }

    model_pricing = pricing.get(model, pricing["gpt-3.5-turbo"])
    prompt_tokens = usage.get("prompt_tokens", 0)
    completion_tokens = usage.get("completion_tokens", 0)

    return (prompt_tokens * model_pricing["prompt"]) + \
        (completion_tokens * model_pricing["completion"])


# Ensure spans are flushed before process exits
def flush_spans():
    provider = trace.get_tracer_provider()
    if hasattr(provider, "force_flush"):
        provider.force_flush()


atexit.register(flush_spans)

The Challenges with Manual Setup

- Span Lifecycle Management: You must ensure spans are flushed before the Python process exits. If your script ends too quickly, buffered spans are never exported and the trace is lost.

- Cost Calculation: You must manually implement pricing logic for every model (OpenAI, Anthropic, Google, etc.). This is error-prone and requires constant updates.

- Component Instrumentation: Haystack pipelines can have many components. Manually instrumenting each one is tedious.

- Usage Extraction: Different generators (OpenAI, Anthropic, etc.) return usage information in different formats. You must handle each case.

- LiteLLM Integration: If you use LiteLLM within Haystack (common for multi-provider support), you need separate instrumentation.
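The usage extraction problem above can be handled with a small normalization step. The sketch below maps the OpenAI-style keys (`prompt_tokens`, `completion_tokens`) and the Anthropic-style keys (`input_tokens`, `output_tokens`) onto the attribute names used in this guide. `normalize_usage` is a hypothetical helper, and other providers may need additional cases.

```python
from typing import Any, Mapping


def normalize_usage(usage: Mapping[str, Any]) -> dict[str, int]:
    """Map provider-specific usage keys onto one set of span attributes."""
    # OpenAI-style keys first, then Anthropic-style keys as the fallback
    prompt = usage.get("prompt_tokens", usage.get("input_tokens", 0))
    completion = usage.get("completion_tokens", usage.get("output_tokens", 0))
    # Some providers omit the total, so derive it when missing
    total = usage.get("total_tokens", prompt + completion)
    return {
        "llm.tokens.prompt": prompt,
        "llm.tokens.completion": completion,
        "llm.tokens.total": total,
    }


openai_style = normalize_usage(
    {"prompt_tokens": 250, "completion_tokens": 50, "total_tokens": 300}
)
anthropic_style = normalize_usage({"input_tokens": 120, "output_tokens": 30})
```

Normalizing once, before attributes are set on the span, keeps the instrumentation code independent of which generator produced the response.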

Read the manual docs: Haystack Tracing Guide

Option B: The Keywords AI Way

With Keywords AI, we've built a dedicated exporter specifically for the Haystack OpenTelemetry integration. Here's how simple it is:

Full setup guide: Haystack + Keywords AI tracing

Step 1: Install Keywords AI Tracing

pip install keywords-ai-tracing

Step 2: Initialize (One Line)

# main.py
import os

from keywords_ai_tracing import KeywordsTracer

# Initialize - everything is handled automatically
tracer = KeywordsTracer(api_key=os.getenv("KEYWORDSAI_API_KEY"))

# That's it! Haystack is now automatically instrumented

Step 3: Use Your Pipeline Normally

from haystack import Pipeline
from haystack.components.builders import PromptBuilder
from haystack.components.generators import OpenAIGenerator
from haystack.utils import Secret

# Create your pipeline as normal
pipeline = Pipeline()
pipeline.add_component(
    "prompt_builder",
    PromptBuilder(template="Answer: {{query}}")
)
pipeline.add_component(
    "llm",
    # Haystack expects a Secret, not a raw string
    OpenAIGenerator(api_key=Secret.from_env_var("OPENAI_API_KEY"))
)
pipeline.connect("prompt_builder.prompt", "llm.prompt")

# Run it - tracing happens automatically
result = pipeline.run({"query": "What is the capital of France?"})

That's it. Keywords AI automatically:

- Detects all Haystack components

- Creates spans for retrieval, prompt building, and generation

- Extracts token usage and costs (for all providers)

- Handles LiteLLM if you use it within Haystack

- Flushes spans before process termination

- Maps everything to a beautiful dashboard

Why It's Better

- Zero Configuration: No manual tracer setup, no span lifecycle management

- Automatic Cost Tracking: Supports 100+ models with up-to-date pricing

- LiteLLM Support: If you use LiteLLM within Haystack, it's automatically traced

- Component Detection: Automatically instruments all Haystack components

- Production Ready: Handles edge cases, errors, and async operations

Setup: LiteLLM Observability + OpenTelemetry

LiteLLM is a unified proxy that standardizes calls across 100+ LLM providers. It's perfect for:

- Multi-provider applications (switching between OpenAI, Anthropic, Google, etc.)

- Cost optimization (automatic fallbacks to cheaper models)

- Rate limit management

- Load balancing across providers

LiteLLM observability is crucial for understanding which models perform best and optimizing costs across providers. LiteLLM has built-in support for observability through callbacks, but integrating with OpenTelemetry requires manual work. Let's compare both approaches for implementing LiteLLM observability.

Option A: Manual OTel Setup (The Hard Way)

LiteLLM provides callback hooks that you can use to send data to OpenTelemetry. Here's the complete manual setup:

Step 1: Install Dependencies

pip install litellm opentelemetry-api opentelemetry-sdk opentelemetry-exporter-otlp-proto-http

Step 2: Create OpenTelemetry Callback

# litellm_otel_callback.py

from opentelemetry import trace

from opentelemetry.sdk.trace import TracerProvider

from opentelemetry.sdk.trace.export import BatchSpanProcessor

from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter

from opentelemetry.sdk.resources import Resource

from opentelemetry.semantic_conventions.resource import ResourceAttributes

import os

from typing import Optional, Dict, Any

from litellm import completion

# Initialize OpenTelemetry

resource = Resource.create({

ResourceAttributes.SERVICE_NAME: "litellm-proxy",

})

provider = TracerProvider(resource=resource)

trace.set_tracer_provider(provider)

exporter = OTLPSpanExporter(

endpoint="https://api.keywordsai.co/v1/traces",

headers={

"Authorization": f"Bearer {os.getenv('KEYWORDSAI_API_KEY')}",

},

)

processor = BatchSpanProcessor(exporter)

provider.add_span_processor(processor)

tracer = trace.get_tracer(__name__)

# Store active spans in a thread-safe way

from threading import local

_thread_local = local()

def get_current_span():

"""Get the current span from thread local storage"""

return getattr(_thread_local, 'span', None)

def set_current_span(span):

"""Set the current span in thread local storage"""

_thread_local.span = span

class LiteLLMOpenTelemetryCallback:

"""OpenTelemetry callback for LiteLLM"""

def __init__(self):

self.tracer = tracer

def log_success_event(

self, kwargs, response_obj, start_time, end_time

):

"""Called when a completion succeeds"""

span = get_current_span()

if span:

model = kwargs.get("model", "unknown")

span.set_attribute("llm.model", model)

span.set_attribute(

"llm.provider", self._extract_provider(model)

)

if hasattr(response_obj, "usage"):

usage = response_obj.usage

span.set_attribute(

"llm.tokens.prompt", usage.prompt_tokens

)

span.set_attribute(

"llm.tokens.completion", usage.completion_tokens

)

span.set_attribute(

"llm.tokens.total", usage.total_tokens

)

if (hasattr(response_obj, "choices")

and len(response_obj.choices) > 0):

span.set_attribute(

"llm.response",

response_obj.choices[0].message.content

)

latency_ms = (end_time - start_time) * 1000

span.set_attribute("llm.latency_ms", latency_ms)

cost = self._calculate_cost(kwargs, response_obj)

span.set_attribute("llm.cost", cost)

if "messages" in kwargs:

span.set_attribute(

"llm.messages.count", len(kwargs["messages"])

)

span.set_attribute(

"llm.messages.last",

str(kwargs["messages"][-1])

)

if "temperature" in kwargs:

span.set_attribute(

"llm.temperature", kwargs["temperature"]

)

if "max_tokens" in kwargs:

span.set_attribute(

"llm.max_tokens", kwargs["max_tokens"]

)

span.set_status(

trace.Status(trace.StatusCode.OK)

)

span.end()

set_current_span(None)

    def log_failure_event(
        self, kwargs, response_obj, start_time, end_time, error=None
    ):
        """Called when a completion fails"""
        span = get_current_span()
        if span:
            # If no error is passed explicitly, fall back to the
            # exception LiteLLM stores in kwargs
            error = error or kwargs.get("exception")
            if error is not None:
                span.record_exception(error)
            span.set_status(
                trace.Status(trace.StatusCode.ERROR, str(error))
            )
            span.end()
            set_current_span(None)

    async def async_log_success_event(
        self, kwargs, response_obj, start_time, end_time
    ):
        """Async version - same as sync"""
        self.log_success_event(
            kwargs, response_obj, start_time, end_time
        )

    async def async_log_failure_event(
        self, kwargs, response_obj, start_time, end_time, error=None
    ):
        """Async version - same as sync"""
        self.log_failure_event(
            kwargs, response_obj, start_time, end_time, error
        )

    def _extract_provider(self, model: str) -> str:
        """Extract provider name from model string"""
        if model.startswith("gpt-") or model.startswith("o1-"):
            return "openai"
        elif model.startswith("claude-") or model.startswith("sonnet-"):
            return "anthropic"
        elif model.startswith("gemini-") or "google" in model.lower():
            return "google"
        elif model.startswith("llama-") or "meta" in model.lower():
            return "meta"
        else:
            return "unknown"

    def _calculate_cost(
        self, kwargs: Dict, response_obj: Any
    ) -> float:
        """Manually calculate cost for all 100+ models"""
        model = kwargs.get("model", "gpt-3.5-turbo")
        if not hasattr(response_obj, "usage"):
            return 0.0
        usage = response_obj.usage
        # Prices are USD per token (listed per 1,000 tokens)
        pricing = {
            "gpt-4": {
                "prompt": 0.03 / 1000,
                "completion": 0.06 / 1000,
            },
            "gpt-4-turbo": {
                "prompt": 0.01 / 1000,
                "completion": 0.03 / 1000,
            },
            "gpt-3.5-turbo": {
                "prompt": 0.0015 / 1000,
                "completion": 0.002 / 1000,
            },
            "o1-preview": {
                "prompt": 0.015 / 1000,
                "completion": 0.06 / 1000,
            },
            "claude-3-opus": {
                "prompt": 0.015 / 1000,
                "completion": 0.075 / 1000,
            },
            "claude-3-sonnet": {
                "prompt": 0.003 / 1000,
                "completion": 0.015 / 1000,
            },
            "claude-3-haiku": {
                "prompt": 0.00025 / 1000,
                "completion": 0.00125 / 1000,
            },
            "gemini-pro": {
                "prompt": 0.0005 / 1000,
                "completion": 0.0015 / 1000,
            },
            # ... you need pricing for 100+ more models
        }
        # Unknown models fall back to a cost of 0
        model_pricing = pricing.get(
            model, {"prompt": 0, "completion": 0}
        )
        return (
            usage.prompt_tokens * model_pricing["prompt"]
            + usage.completion_tokens * model_pricing["completion"]
        )

# Create callback instance
otel_callback = LiteLLMOpenTelemetryCallback()

Step 3: Use LiteLLM with Manual Span Creation

# main.py
import os

from litellm import completion
from opentelemetry import trace

from litellm_otel_callback import (
    otel_callback, tracer, set_current_span
)

def call_llm(messages, model="gpt-4"):
    """Call LiteLLM with manual OpenTelemetry instrumentation"""
    # Start span manually
    span = tracer.start_span("litellm.completion")
    set_current_span(span)
    try:
        span.set_attribute("llm.framework", "litellm")
        span.set_attribute("llm.model", model)
        span.set_attribute("llm.messages", str(messages))
        response = completion(
            model=model,
            messages=messages,
            api_key=os.getenv("OPENAI_API_KEY"),
            callbacks=[otel_callback],
        )
        return response
    except Exception as e:
        span.record_exception(e)
        span.set_status(
            trace.Status(trace.StatusCode.ERROR, str(e))
        )
        raise
    finally:
        # End the span here if the callback has not already ended it,
        # so a span is never left open
        if span.is_recording():
            span.end()
        set_current_span(None)

# Ensure spans are flushed
import atexit

def flush_spans():
    from opentelemetry import trace
    provider = trace.get_tracer_provider()
    if hasattr(provider, "force_flush"):
        provider.force_flush()

atexit.register(flush_spans)

The Challenges with Manual Setup

- 100+ Models: You must maintain pricing for every model LiteLLM supports

- Provider-Specific Logic: Different providers return usage data in different formats

- Callback Complexity: Managing span lifecycle across sync and async calls

- Thread Safety: Ensuring spans are correctly associated with requests in multi-threaded environments

- Error Handling: Properly recording exceptions and setting span status

- Cost Calculation: Keeping pricing up-to-date as providers change rates
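The thread-safety and sync/async challenges above share a root cause: thread-local storage is per thread, while asyncio runs many requests on a single thread. Here is a minimal sketch, using only the standard library, of how `contextvars.ContextVar` keeps a per-request value isolated across concurrent tasks. The names `current_request` and `handle` are hypothetical, and a plain string stands in for a real span.

```python
import asyncio
import contextvars

# Hypothetical stand-in for "the active span" of a request
current_request = contextvars.ContextVar("current_request", default=None)

async def handle(name: str, delay: float) -> str:
    """Set a per-task value, yield to other tasks, then read it back."""
    current_request.set(name)
    await asyncio.sleep(delay)  # other tasks run and set their own value here
    return current_request.get()

async def main() -> list:
    # Both coroutines run on the same thread; gather() wraps each one in a
    # task, and every task works on its own copy of the context
    return await asyncio.gather(
        handle("request-a", 0.02),
        handle("request-b", 0.01),
    )

results = asyncio.run(main())
print(results)
```

Each task reads back its own value even though both share one thread, which a `threading.local` cannot guarantee. OpenTelemetry's Python context API uses the same mechanism by default, which is one reason `start_as_current_span` tends to be safer than hand-rolled span storage.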

Option B: The Keywords AI Way

Keywords AI has native LiteLLM support. Here's how simple it is:

Full setup guide: LiteLLM + Keywords AI

Step 1: Install Keywords AI Tracing

pip install keywords-ai-tracing

Step 2: Initialize (One Line)

# main.py
import os

from keywords_ai_tracing import KeywordsTracer
from litellm import completion

# Initialize - LiteLLM is automatically instrumented
KeywordsTracer.init(api_key=os.getenv("KEYWORDSAI_API_KEY"))
# That's it!

Step 3: Use LiteLLM Normally

import os

from litellm import completion

# Use LiteLLM as normal - tracing happens automatically
response = completion(
    model="gpt-4",
    messages=[{"role": "user", "content": "Hello!"}],
    api_key=os.getenv("OPENAI_API_KEY"),
)

# Or use any of the 100+ models
response = completion(
    model="claude-3-sonnet",
    messages=[{"role": "user", "content": "Hello!"}],
    api_key=os.getenv("ANTHROPIC_API_KEY"),
)

That's it. Keywords AI automatically:

- Detects all LiteLLM calls

- Extracts model, tokens, and usage for all 100+ supported models

- Calculates costs with up-to-date pricing

- Handles sync and async calls

- Works with LiteLLM proxy mode

- Maps everything to a unified dashboard

Why It's Better

- 100+ Models Supported: Automatic cost tracking for every model LiteLLM supports

- Zero Configuration: No callbacks, no manual span management

- Proxy Mode Support: Works seamlessly with LiteLLM proxy

- Automatic Updates: Pricing updates automatically as providers change rates

- Multi-Provider: Handles OpenAI, Anthropic, Google, Meta, and 96+ more providers

Advanced Topics: Distributed Tracing, Context Propagation, and Sampling

Once you have basic OpenTelemetry instrumentation working, you'll want to understand these advanced concepts for production deployments.

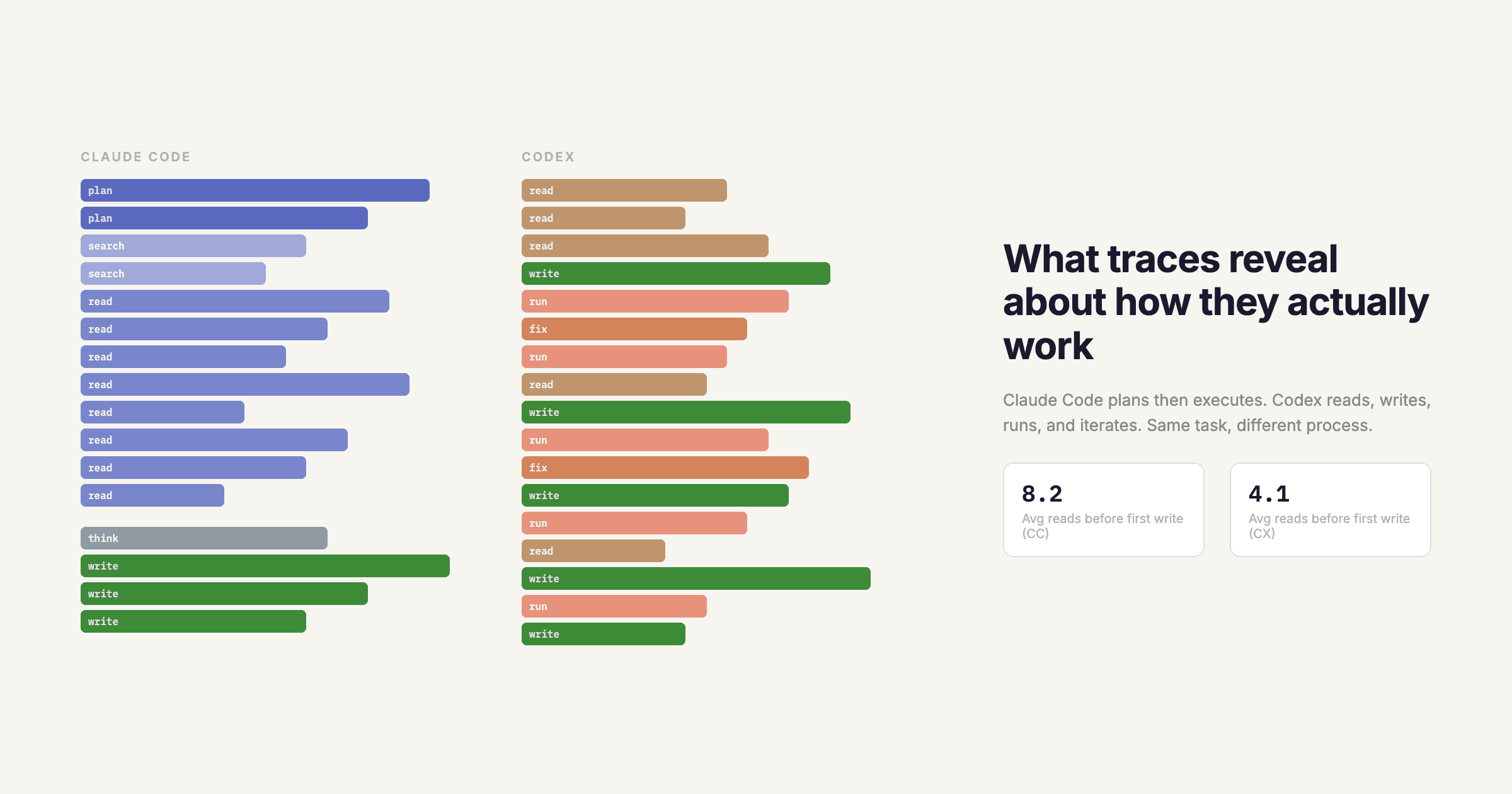

Distributed Tracing

In microservices architectures, a single user request might trigger:

- API Gateway (receives request)

- Auth Service (validates token)

- RAG Service (retrieves documents)

- LLM Service (generates response)

- Post-Processing Service (formats output)

Distributed tracing allows you to follow this request across all services. OpenTelemetry achieves this through context propagation.

How Context Propagation Works

When Service A calls Service B, it includes trace context in the HTTP headers:

# Service A
import requests
from opentelemetry import trace
from opentelemetry.propagate import inject

tracer = trace.get_tracer(__name__)

# The span must be the current span so inject() can read its context
with tracer.start_as_current_span("service_a.operation"):
    headers = {}
    # Inject trace context into headers
    inject(headers)
    # Make HTTP request to Service B
    response = requests.get("http://service-b/api", headers=headers)

# Service B
from opentelemetry import trace
from opentelemetry.propagate import extract

tracer = trace.get_tracer(__name__)

# Extract trace context from headers
# (request is the incoming request object from your web framework)
context = extract(request.headers)

# Continue the trace
with tracer.start_as_current_span(
    "service_b.operation", context=context
):
    # This span will be a child of Service A's span
    pass

Context Propagation in LLM Applications

For LLM applications, context propagation is critical when:

- Your frontend calls your backend API

- Your backend calls multiple LLM providers

- You use function calling or tool use (each tool call should be a child span)

- You have agentic loops (each iteration should be a child span)
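With the default W3C Trace Context propagator, the context that `inject` writes into the headers is a single `traceparent` value. The sketch below shows that format using only the standard library. `build_traceparent` and `parse_traceparent` are hypothetical helper names for illustration, not part of the OpenTelemetry API, and the IDs are the example values from the W3C specification.

```python
import re

# W3C Trace Context layout: version-traceid-parentid-flags, lowercase hex
_TRACEPARENT = re.compile(
    r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$"
)

def build_traceparent(trace_id: int, span_id: int, sampled: bool) -> str:
    """Format a traceparent header value (version 00)."""
    flags = 1 if sampled else 0
    return f"00-{trace_id:032x}-{span_id:016x}-{flags:02x}"

def parse_traceparent(value: str) -> dict:
    """Parse a traceparent header value into its fields."""
    match = _TRACEPARENT.match(value)
    if match is None:
        raise ValueError(f"invalid traceparent: {value!r}")
    version, trace_id, span_id, flags = match.groups()
    return {
        "version": version,
        "trace_id": int(trace_id, 16),
        "span_id": int(span_id, 16),
        "sampled": bool(int(flags, 16) & 0x01),
    }

header = build_traceparent(
    0x4BF92F3577B34DA6A3CE929D0E0E4736, 0x00F067AA0BA902B7, True
)
parsed = parse_traceparent(header)
print(header)
```

A downstream service that continues the trace keeps the same trace ID and records the incoming span ID as the parent of its own span, which is what makes each tool call or loop iteration show up as a child.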

Sampling

In high-traffic applications, tracing every request can be expensive. Sampling allows you to trace only a percentage of requests.

Head-Based Sampling

Decide whether to sample at the start of the trace:

from opentelemetry.sdk.trace import TracerProvider
from opentelemetry.sdk.trace.sampling import TraceIdRatioBased

# Sample 10% of traces
sampler = TraceIdRatioBased(0.1)
provider = TracerProvider(sampler=sampler)

Tail-Based Sampling

Decide whether to keep a trace after it completes (useful for keeping error traces). This requires an OpenTelemetry Collector running the tail sampling processor, and it is configured in the Collector config, not in application code.

Smart Sampling for LLMs

For LLM applications, consider:

- Always sample errors: Keep 100% of failed requests

- Sample by cost: Keep traces for expensive requests (high token usage)

- Sample by user: Keep traces for specific users (e.g., beta testers)

- Sample by model: Keep more traces for new/experimental models
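Here is a sketch of how these rules might combine into a single keep-or-drop decision. It is plain Python meant to show the logic only. In practice this kind of decision usually runs as tail-based sampling in a collector, because errors and token counts are known only once the trace completes. The `TraceSummary` fields, the thresholds, the user allowlist, and the model name are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class TraceSummary:
    """Hypothetical summary of a finished trace."""
    trace_id: int
    has_error: bool
    total_tokens: int
    user_id: str
    model: str

ALWAYS_KEEP_USERS = {"beta-tester-1"}       # assumed allowlist
EXPENSIVE_TOKENS = 8000                     # assumed cost threshold
MODEL_RATES = {"experimental-model": 0.5}   # assumed per-model ratios
DEFAULT_RATE = 0.1

def keep_trace(t: TraceSummary) -> bool:
    """Return True if the trace should be kept."""
    if t.has_error:                          # always sample errors
        return True
    if t.total_tokens >= EXPENSIVE_TOKENS:   # sample by cost
        return True
    if t.user_id in ALWAYS_KEEP_USERS:       # sample by user
        return True
    # Sample by model: deterministic ratio check on the low 64 bits of
    # the trace ID, so every service reaches the same decision
    rate = MODEL_RATES.get(t.model, DEFAULT_RATE)
    return (t.trace_id & (2**64 - 1)) < int(rate * 2**64)

example = TraceSummary(2**64 - 1, True, 120, "user-42", "gpt-4")
print(keep_trace(example))
```

Deriving the ratio decision from the trace ID rather than a random number keeps it reproducible: the same trace is kept or dropped no matter which process evaluates it.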

Resource Attributes

Resource attributes describe the service that generated the telemetry:

import socket

from opentelemetry.sdk.resources import Resource
from opentelemetry.semconv.resource import ResourceAttributes

resource = Resource.create({
    ResourceAttributes.SERVICE_NAME: "my-llm-app",
    ResourceAttributes.SERVICE_VERSION: "1.2.3",
    ResourceAttributes.DEPLOYMENT_ENVIRONMENT: "production",
    ResourceAttributes.HOST_NAME: socket.gethostname(),
})

These attributes appear on every span and help you filter traces in your backend.

Custom Attributes and Events

Beyond the standard attributes, you can add custom ones:

span = tracer.start_span("llm.completion")
span.set_attribute("llm.user_id", user_id)
span.set_attribute("llm.session_id", session_id)
span.set_attribute("llm.experiment_variant", "A")  # For A/B testing

# Add events (timestamped annotations)
span.add_event("retrieval.started", {"query": query})
span.add_event("retrieval.completed", {"doc_count": 5})

The Verdict: Manual vs. Automated Tracing

While manual OpenTelemetry setup is "free" (no vendor cost), the engineering hours spent maintaining it are not. Let's break down the real costs:

Time Investment

| Task | Manual OTel | Keywords AI |
|---|---|---|
| Initial Setup | 2-4 Hours | 2 Minutes |
| Cost Calculation Implementation | 4-8 Hours (for 10 models) | 0 (automatic) |
| Maintenance per SDK Update | 1-2 Hours | 0 (automatic) |
| Adding New Model Support | 30-60 Minutes per model | 0 (automatic) |
| Dashboard Setup | 2-4 Hours (Jaeger/Grafana) | 0 (included) |
| Edge Runtime Compatibility | 4-8 Hours | 0 (works out of the box) |

Feature Comparison

| Feature | Manual OTel | Keywords AI |
|---|---|---|
| Setup Time | 2-4 Hours | 2 Minutes |
| Cost Tracking | Manual Calculation (error-prone) | Built-in / Automatic (100+ models) |
| Maintenance | High (Updates with every SDK change) | Zero |
| Dashboard | Requires extra tool (Jaeger/Honeycomb/Grafana) | Included |
| LLM-Specific Views | Must build custom dashboards | Pre-built (costs, tokens, latency) |
| User Analytics | Must implement custom logic | Built-in |
| Alerting | Must set up separately | Built-in |
| Prompt Management | Not included | Included |
| Multi-Provider Support | Manual implementation | Automatic (100+ providers) |

The Hidden Costs of Manual Setup

- Pricing Maintenance: LLM providers change pricing frequently. You must update your cost calculation code every time.

- SDK Compatibility: When Vercel AI SDK, Haystack, or LiteLLM release updates, your instrumentation might break.

- Edge Cases: Handling async operations, streaming, error cases, and context propagation correctly is non-trivial.

- Dashboard Development: Building useful dashboards for LLM-specific metrics (cost per user, token efficiency, etc.) takes significant time.

- Team Onboarding: New engineers must understand your custom instrumentation code.

When Manual OTel Makes Sense

Manual setup might be worth it if:

- You're building a custom observability platform

- You have strict compliance requirements that prevent using third-party services

- You're already heavily invested in a specific backend (e.g., Datadog, New Relic)

- You have a dedicated observability team

When Keywords AI Makes Sense

When choosing the best LLM observability platform for your team, Keywords AI is the better choice if:

- You want to focus on building AI features, not observability infrastructure

- You need LLM-specific analytics (costs, token usage, quality metrics)

- You want to get started quickly (2 minutes vs. 2-4 hours)

- You use multiple LLM providers and want unified observability

- You want built-in features like prompt management and user analytics

- You need an LLM observability platform that works seamlessly with Vercel AI SDK telemetry, LiteLLM observability, and Haystack pipelines

The ROI Calculation

Let's say you spend:

- 4 hours on initial setup (manual)

- 2 hours/month on maintenance

- 1 hour per new model added (10 models/year = 10 hours)

Total: 4 + (2 x 12) + 10 = 38 hours/year

At $100/hour (senior engineer rate), that's $3,800/year in engineering time.
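The same arithmetic as a small function, so you can plug in your own team's numbers. The function and parameter names are hypothetical; the inputs below are the figures assumed above.

```python
def annual_engineering_cost(setup_hours, maintenance_hours_per_month,
                            hours_per_model, models_per_year, hourly_rate):
    """Return (hours per year, cost per year) for manual instrumentation."""
    hours = (setup_hours
             + maintenance_hours_per_month * 12
             + hours_per_model * models_per_year)
    return hours, hours * hourly_rate

hours, cost = annual_engineering_cost(
    setup_hours=4,
    maintenance_hours_per_month=2,
    hours_per_model=1,
    models_per_year=10,
    hourly_rate=100,
)
print(f"{hours} hours/year, ${cost:,}/year")
```

With the inputs above this gives the same 38 hours and $3,800 per year.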

Keywords AI pricing starts at much less than this, and you get:

- Zero maintenance

- Automatic updates

- Built-in dashboards

- LLM-specific features

- Support

The verdict: Unless you have specific requirements that prevent using a third-party service, Keywords AI provides better ROI for most teams.

Production Best Practices

Once you have OpenTelemetry instrumentation working, follow these best practices for production deployments:

1. Instrument at the Framework Level

Don't add tracing code in every function. Instead, instrument where you initialize your LLM clients:

# Good: Instrument at initialization
import os

from keywords_ai_tracing import KeywordsTracer
from litellm import completion

KeywordsTracer.init(api_key=os.getenv("KEYWORDSAI_API_KEY"))

# Now all LLM calls are automatically traced
response = completion(model="gpt-4", messages=messages)

# Bad: Manual instrumentation everywhere
def call_llm(messages):
    span = tracer.start_span("llm.call")  # Don't do this
    # ...

2. Capture User Context

Include user IDs and session IDs in your spans to enable user-level analytics:

span.set_attribute("llm.user_id", user_id)
span.set_attribute("llm.session_id", session_id)
span.set_attribute("llm.customer_id", customer_id)

3. Set Appropriate Sampling Rates

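Typical rates by environment:

- Development: 100% sampling (see everything)

- Staging: 50% sampling

- Production: 10% sampling, plus all errors (keeping every error requires tail-based sampling, because a head-based sampler decides before the outcome is known)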

from opentelemetry.sdk.trace.sampling import TraceIdRatioBased

sampler = TraceIdRatioBased(0.1)  # 10% sampling

4. Monitor Trace Volume

High trace volume can be expensive. Monitor:

- Spans per second

- Trace size (number of spans per trace)

- Export latency

If volume is too high, adjust sampling or reduce span granularity.

5. Use Semantic Conventions

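Follow OpenTelemetry's semantic conventions where they exist, and keep custom attribute names consistent and namespaced:

# Good: Consistent, namespaced attribute names
span.set_attribute("llm.model", "gpt-4")
span.set_attribute("llm.tokens.prompt", 150)
span.set_attribute("http.method", "POST")
# OpenTelemetry's evolving GenAI semantic conventions define
# equivalents such as gen_ai.request.model and gen_ai.usage.input_tokens

# Bad: Ad hoc attribute names
span.set_attribute("model_name", "gpt-4")  # Inconsistent

6. Handle Errors Gracefully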

Always record exceptions and set span status:

try:
    response = completion(model="gpt-4", messages=messages)
    span.set_status(trace.Status(trace.StatusCode.OK))
except Exception as e:
    span.record_exception(e)
    span.set_status(
        trace.Status(trace.StatusCode.ERROR, str(e))
    )
    raise

7. Set Up Alerts

Configure alerts for:

- Cost spikes: Unexpected increases in LLM spending

- Latency degradation: p95 latency above threshold

- Error rate increases: More than X% of requests failing

- Token usage anomalies: Unusual token consumption patterns
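As a rough sketch of what two of these checks compute, the function below evaluates a p95 latency threshold and an error-rate threshold over one time window. The function names, default thresholds, and alert labels are hypothetical; a real setup would normally define these rules in the alerting tool rather than in application code.

```python
import math

def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list (pct is an integer 1-100)."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def check_alerts(latencies_ms, error_count, request_count,
                 p95_threshold_ms=2000.0, max_error_rate=0.05):
    """Return the names of the alerts that fired for this window."""
    alerts = []
    if latencies_ms and percentile(latencies_ms, 95) > p95_threshold_ms:
        alerts.append("latency_degradation")
    if request_count and error_count / request_count > max_error_rate:
        alerts.append("error_rate_increase")
    return alerts

# 94 fast requests and 6 slow ones: the p95 lands on a slow request
window = [100.0] * 94 + [3000.0] * 6
fired = check_alerts(window, error_count=2, request_count=100)
print(fired)
```

Percentiles are used instead of averages because a small share of very slow requests barely moves the mean but is exactly what users notice.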

8. Review Traces Regularly

Don't just collect traces—use them:

- Weekly reviews: Identify optimization opportunities

- Post-incident analysis: Understand what went wrong

- Cost optimization: Find expensive operations to optimize

- Quality monitoring: Track response quality over time

9. Version Your Instrumentation

When you update your instrumentation code, include version information:

resource = Resource.create({
    ResourceAttributes.SERVICE_VERSION: "1.2.3",
    "instrumentation.version": "2.0.0",
})

This helps you correlate issues with code changes.

10. Test Your Instrumentation

Don't assume your instrumentation works. Test it:

- In development with 100% sampling

- With error cases (timeouts, rate limits, invalid inputs)

- With high load (ensure it doesn't impact performance)

- With different models (verify cost calculation is correct)
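For the last point, a minimal sketch of a cost-calculation check. It uses a standalone `calculate_cost` function (a hypothetical simplification of the callback's `_calculate_cost` method) and two entries from the manual pricing table in this guide, which may not match current provider pricing.

```python
import math

# USD per token, taken from the manual pricing table in this guide
PRICING = {
    "gpt-4": {"prompt": 0.03 / 1000, "completion": 0.06 / 1000},
    "claude-3-haiku": {"prompt": 0.00025 / 1000, "completion": 0.00125 / 1000},
}

def calculate_cost(model, prompt_tokens, completion_tokens):
    """Return the request cost in USD; unknown models cost 0."""
    price = PRICING.get(model, {"prompt": 0, "completion": 0})
    return (prompt_tokens * price["prompt"]
            + completion_tokens * price["completion"])

def test_known_model():
    # 1,000 prompt tokens ($0.03) + 500 completion tokens ($0.03)
    assert math.isclose(calculate_cost("gpt-4", 1000, 500), 0.06)

def test_unknown_model_costs_zero():
    # A missing pricing entry silently reports $0, so assert it explicitly
    assert calculate_cost("not-a-model", 1000, 500) == 0

test_known_model()
test_unknown_model_costs_zero()
```

The second test documents a failure mode worth knowing about: a model missing from the table does not raise an error, it just under-reports spend.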

Conclusion

OpenTelemetry has become the de facto standard for LLM observability in production. As an open source, vendor-neutral framework, it gives you one way to observe your applications, whether you're using Haystack for RAG pipelines, the Vercel AI SDK for Next.js apps, or LiteLLM for multi-provider setups.

The choice between manual setup and a managed platform comes down to:

- Manual OTel: More control, but significant engineering investment. You'll need to build custom dashboards and maintain pricing tables for all models.

- Keywords AI: Faster setup, zero maintenance, and LLM-specific features, with instrumentation, cost tracking, and dashboards handled for you.

For most teams building production LLM applications, a managed platform provides better ROI. With Keywords AI, you get production-ready LLM observability in 2 minutes instead of 2-4 hours, automatic cost tracking for 100+ models, and built-in dashboards optimized for LLM workflows. By the estimate above, that saves dozens of engineering hours a year, whether you're instrumenting the Vercel AI SDK, LiteLLM, or Haystack.

Ready to see your traces?

Get started with Keywords AI in 60 seconds and start instrumenting your LLM applications today. Focus on building great AI features, not observability infrastructure.